From Fear to Implementation: A Zurich Roundtable on Enterprise AI in 2026

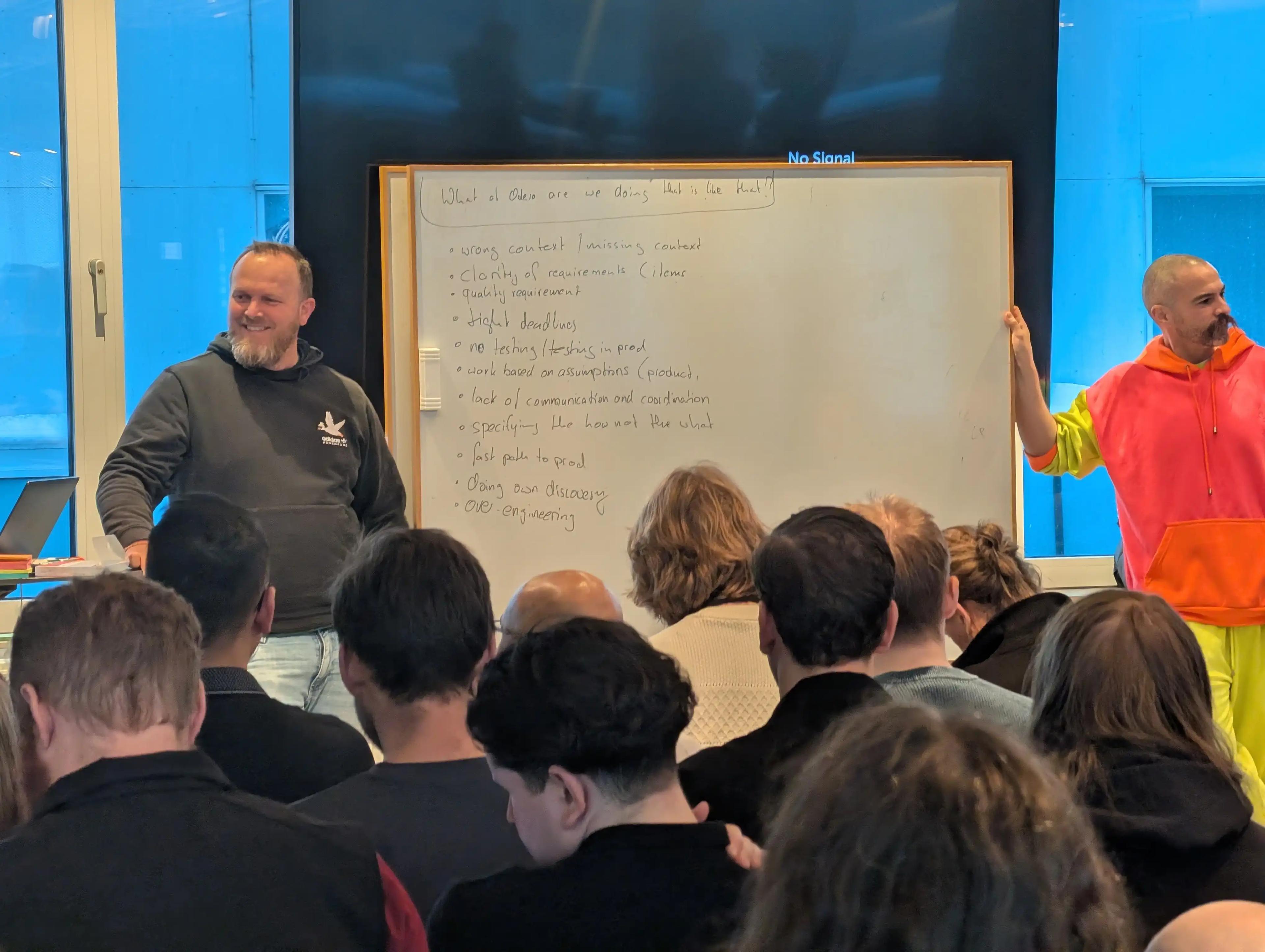

We host senior leaders' roundtables regularly across Western Europe. Each group is small and hand-picked, with participants at a similar level of seniority. The format is a facilitated roundtable: our team keeps the debate on topic and makes sure everyone gets to speak. At that level of seniority, in a room that size, the conversation tends to go places it wouldn't in a larger or more public setting. What follows is an executive summary of the Zurich edition, held on March 12, 2026.

Who Was in the Room

- A data and AI strategy lead at a major Swiss financial institution, navigating the gap between what enterprise tools offer and what people actually need

- An AI literacy consultant, helping organisations and individuals upskill in practice rather than just acquire licenses

- A managing partner at a legal tech firm, focused on digital trust, identity infrastructure, and AI governance for government and private-sector clients

- An AI transformation lead at a large industrial company, moving POC-grade experiments into enterprise-grade production across generative AI and computer vision

- A founder building an AI-driven risk intelligence platform for institutional investors in digital assets, with a background in knowledge graphs and distributed systems

- An IT infrastructure and platform engineering lead at a financial institution, managing service onboarding speed and the security review process for a technology that moves faster than either

The Conversation Has Shifted

A year ago, roundtables like this spent considerable time establishing whether AI was credible and whether the fear around it was justified. This one opened on operating models, agent governance, and what development organisations look like when sprint backlogs empty in days.

For senior practitioners working on these problems directly, the debate has moved from fear to implementation. That shift doesn't mean fear has disappeared outside rooms like this, and one participant made exactly that point. But the questions at this table had moved, and the conversation followed.

The SaaS Question

One thread produced sharp disagreement: what some investors are calling the SaaS Armageddon.

SaaS companies collectively lost significant market capitalisation last year. The investor logic: companies that can demonstrate they're benefiting from AI get re-rated upward; companies that can't face a compressed earnings window, from a projected 20 years down to around 7. The driver is the erosion of software complexity as a competitive barrier. Building a CRM or a booking platform used to require years of engineering investment. A small team with AI coding tools can now build a functional equivalent in weeks.

One participant's example: within three months of an AI adoption push at a real estate software company, two or three new competitors appeared. They had clearly existed for only a few months, yet their platforms were functionally comparable in the key areas of that market.

On the other side: the value of companies like HubSpot and Booking.com lives in client relationships, accumulated data, and operational trust built over years. None of that gets cheaper to build because coding got faster. Where the moat lives determines who survives the shift.

Vibe Coding

Two examples from the room, both from people with direct experience:

The upside: A mobile app stuck in planning for 18 months. A single developer built and shipped it to both app stores in three days.

The other side: Developers generating enough AI-produced code that reviewing it becomes cognitively impossible. The ceiling on what humans can verify per day is fixed. What AI can generate per day is not.

One enterprise approach the room found credible: treat every vibe-coded tool as a proof of concept by default. Nothing goes to production without an evaluation that asks two questions: does it address the problem it was built for, and what would it cost to make it production-grade? For most enterprise use cases, the value of vibe coding lies in discovery, surfacing what people need before anyone commits to building it properly.
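The POC-by-default rule can be sketched as a promotion gate. This is a minimal Python illustration, not a process anyone in the room described in code: the `VibeCodedTool` and `Evaluation` records, the `promote` function, and the idea of comparing a hardening-cost estimate in person-days against a budget are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    PROOF_OF_CONCEPT = "proof_of_concept"
    PRODUCTION = "production"


@dataclass
class VibeCodedTool:
    """Hypothetical record for an AI-generated internal tool."""
    name: str
    problem_statement: str
    # Every tool starts as a proof of concept by default.
    stage: Stage = Stage.PROOF_OF_CONCEPT


@dataclass
class Evaluation:
    """Hypothetical answers to the two evaluation questions."""
    solves_stated_problem: bool
    # Assumed unit: estimated person-days to make the tool production-grade.
    hardening_cost_days: float


def promote(tool: VibeCodedTool, evaluation: Optional[Evaluation], budget_days: float) -> Stage:
    """Move a tool to production only if it passed evaluation and hardening fits the (assumed) budget."""
    if evaluation is None:
        # No evaluation means no production deployment.
        return tool.stage
    if evaluation.solves_stated_problem and evaluation.hardening_cost_days <= budget_days:
        tool.stage = Stage.PRODUCTION
    return tool.stage
```

The design keeps the safe state as the default: a tool reaches production only through an explicit decision that answers both questions, and anything unevaluated stays a proof of concept.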

The Agent Definition Problem

"We want agents" is now a standard enterprise request. The problem is that it means different things to different people, often in the same room, and that terminological confusion misdirects real budget.

A taxonomy that resonated across the group:

| Category | How it works |
|---|---|
| AI Tool | Works while you're actively using it, stops when you aren't. Most current IDE copilots sit here. |
| AI Assistant | Operates within parameters you define, returns outputs for review. Human sets scope; system executes. |
| AI Agent | Fully autonomous within defined boundaries. Escalates when blocked, holds credentials, makes decisions within set limits. |

Most of what gets called "agentic AI" in enterprise contexts today is the second category. Starting with governed assistants is where organisations build the track record they need before expanding scope.

The accountability question followed directly: when an agent makes a consequential mistake, who is responsible? One participant from financial services described using an impact-and-probability matrix. If either the potential impact or the probability of error is high, humans stay in the loop; only where both are lower is more autonomy appropriate.
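The participant's matrix reduces to a simple rule, sketched below in Python with two levels per axis. The `Level` and `Oversight` names and the `required_oversight` function are hypothetical; a real matrix would likely use finer gradations, with thresholds set by the organisation's own risk function.

```python
from enum import Enum


class Level(Enum):
    LOW = 0
    HIGH = 1


class Oversight(Enum):
    HUMAN_IN_THE_LOOP = "a human reviews before the action takes effect"
    MORE_AUTONOMY = "the system acts within set limits and escalates when blocked"


def required_oversight(impact: Level, error_probability: Level) -> Oversight:
    """Hypothetical two-by-two impact-and-probability matrix."""
    # Either dimension being high is enough to keep a human in the loop.
    if impact is Level.HIGH or error_probability is Level.HIGH:
        return Oversight.HUMAN_IN_THE_LOOP
    # Only when both are low does the matrix allow more autonomy.
    return Oversight.MORE_AUTONOMY


if __name__ == "__main__":
    for impact in Level:
        for probability in Level:
            print(impact.name, probability.name, required_oversight(impact, probability).name)
```

Three of the four cells keep a human in the loop, which matches the room's point that most enterprise "agents" today belong in the governed-assistant category.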

AI Literacy

Enterprises are distributing licenses and leaving employees to figure out the rest. One example: an employee at a large company that deployed Copilot attended one training session, found it didn't match the tool they had access to in their corporate environment, and is now paying for individual support out of pocket.

The gap between consumer AI tools and enterprise-managed versions is widening. People who use Claude or ChatGPT personally and then open Copilot at work feel the difference. That gap has morale and retention implications most IT leaders are not accounting for.

The rough estimate from practitioners doing this training work: with proper education and the right tools, around 80–90% of a technical team adapts successfully. The harder question, what happens to those who don't, had no clean answer. In Zurich, one participant estimated that 3,000 to 10,000 banking roles are at risk, and some people are already receiving redundancy notices. Retraining is being adopted more slowly than roles are being displaced.

The Future of the Developer

The "10x faster means 10x fewer" framing was rejected by most of the room.

Faster execution shifts the bottleneck to specification. If engineering output accelerates, the constraint becomes how clearly intent is defined before the system builds. Product owners, and the quality of the specifications they produce, become the limiting factor. Organisations where requirements definition was already the slow part will feel that constraint faster than they expect.

Two groups emerge more clearly under these conditions:

- Senior developers who can define intent, set architectural direction, and review outputs at the system level become more valuable

- Junior developers who built comprehension by writing code and struggling through it face a harder path, because that friction is where the understanding used to come from

One additional nuance the room raised: the human brain doesn't scale at the same rate as the tools. Managing AI-generated output at 10x volume is a different problem from generating it.

What This Conversation Tells Us

The questions at this table had moved on from whether AI is real. The room was working through governance structures, operating models, and what organisations look like under fundamentally different conditions. Those are harder questions, and they're what re:cinq builds these events around.

re:cinq runs senior leaders' roundtables regularly across Western Europe: curated, peer-level conversations for people working through AI transformation at an executive level. If you're navigating these questions and want to be considered for the next one, reach out at re-cinq.com.
