Agents, Correctness, and the Development Process That No Longer Fits: A London Roundtable on Enterprise AI

By Pini Reznik

We host senior leaders roundtables regularly across Western Europe. Each group is small and hand-picked, with an even level of seniority across the table. The format is a facilitated roundtable: our team keeps the debate on topic and makes sure everyone gets to speak. At that level of seniority, in a room that size, the conversation tends to go to places it wouldn't in a larger or more public setting. What follows is an executive summary of the London edition, held on February 26, 2026.

Who Was in the Room

- An AI product consultant with 20 years of experience, focused on aligning leadership and workforce with AI adoption and building experimentation frameworks for AI-native ways of working

- An AI/ML researcher and innovation lead in pharmaceutical drug discovery, running applied research on protein folding, large language models for biotech, and AI-assisted R&D pipelines

- Lead technical architect at an industrial materials trading and e-commerce platform, with a background in building large-scale B2B and marketplace architectures

- Engineering lead at a pan-African fintech and payments company, focused on orchestration architecture and AI systems integration

- Product leader at a work management SaaS platform serving enterprise clients

- Data and AI community leader, focused on accessibility and diversity in AI adoption across organisations

- Technology professional with a background in legal and financial services publishing, joining re:cinq to focus on AI product and community

- CTO-level advisor running a combined hardware and software IoT team, focused on identifying where AI creates value and where current claims break with reality

The Problem of Defining "Good"

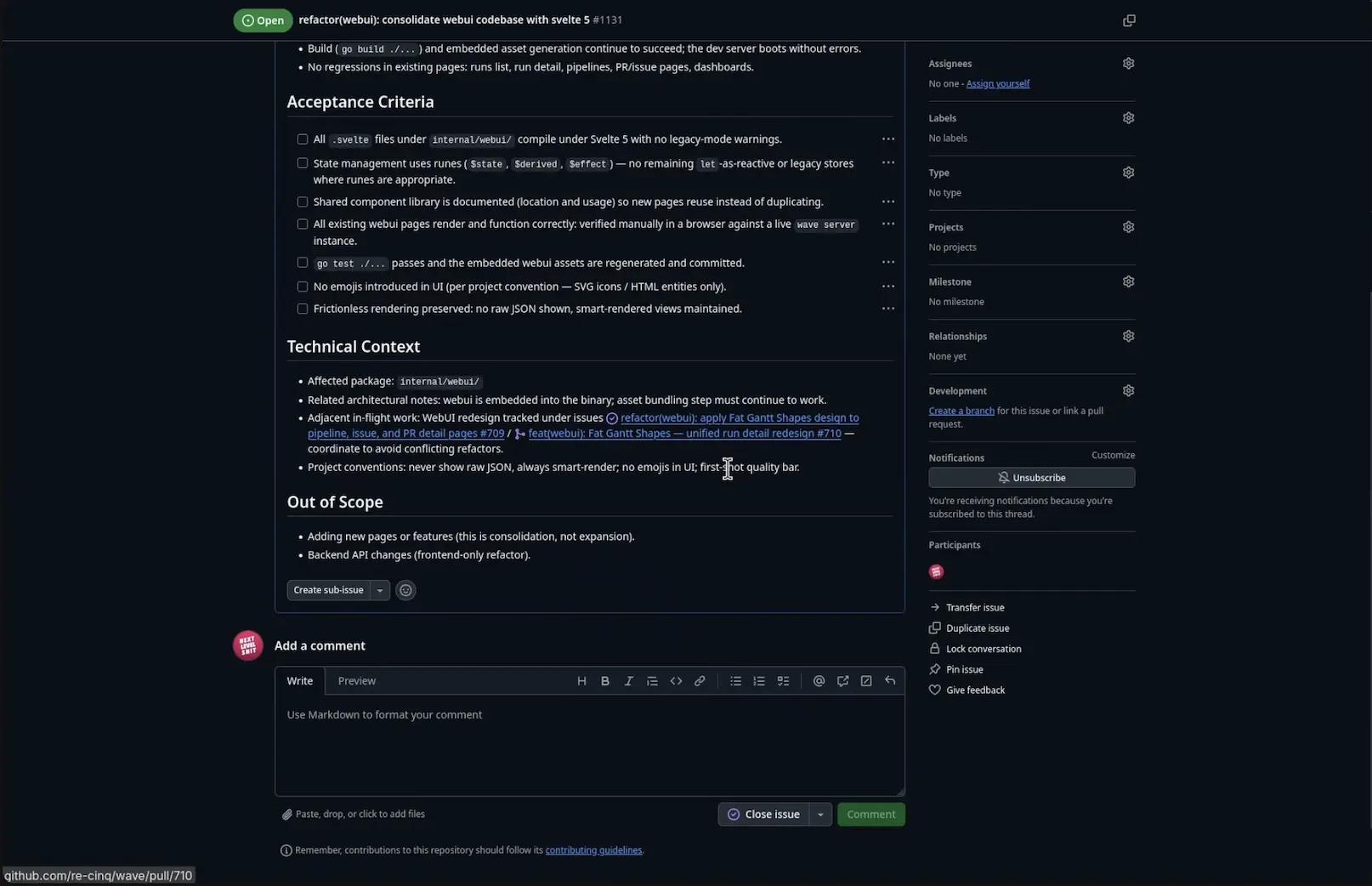

The session opened on something that sounds simple: how do you test AI output?

The group's position: testing is the hardest part of building with AI because it requires defining correctness first, and correctness for AI outputs is often not binary. The standard benchmarks, SWE-bench being the most widely cited, have significant problems. Studies have found that a substantial portion of the tests measure the wrong behavior. More fundamentally, almost all benchmark tests are embedded in model training data, so models can recall the correct answers from training rather than solve the problem. "We are doing to LLMs what we do to humans: training to pass the test instead of training to think."

This has a direct practical consequence. Vendor model comparisons built on these benchmarks carry less weight than most practitioners assume, and procurement decisions based on them can be misleading.

One participant pushed the question further: a CFO agent can give a wrong answer, and so can a human CFO. Why are we holding AI to a standard of infallibility at this stage, when we've never held humans to it? The question was about what "production-ready" means in a domain where determinism was always partially illusory. Traditional software has bugs. Cloud infrastructure design shifted toward accepting that some things will fail, and invested in observability and fast reaction instead. AI systems may require the same mental model: defined error rates, fast detection, and recovery.
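To make "defined error rates, fast detection" concrete, here is a minimal sketch of a rolling error-budget check. It is an illustration rather than anything presented in the session: the class name, the window size, and the 5% threshold are all assumptions, and how an individual output gets judged as an error is left to whatever evaluation the team already has.

```python
from collections import deque


class ErrorBudgetMonitor:
    """Tracks a rolling error rate and flags when it exceeds an agreed budget.

    Hypothetical helper for illustration; thresholds are assumptions.
    """

    def __init__(self, max_error_rate: float, window: int = 100, min_samples: int = 20):
        self.max_error_rate = max_error_rate
        self.min_samples = min_samples
        # Only the most recent `window` outcomes are kept (True = error).
        self.outcomes = deque(maxlen=window)

    def record(self, is_error: bool) -> None:
        self.outcomes.append(is_error)

    @property
    def error_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def breached(self) -> bool:
        # Require a minimum sample size so a single early error does not alert.
        enough_data = len(self.outcomes) >= self.min_samples
        return enough_data and self.error_rate > self.max_error_rate


if __name__ == "__main__":
    monitor = ErrorBudgetMonitor(max_error_rate=0.05, window=50, min_samples=10)
    for _ in range(9):
        monitor.record(False)
    monitor.record(True)
    print(monitor.error_rate, monitor.breached())
```

The recovery step (rollback, human review, switching models) is deliberately left out, since it depends on the application.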

The counterargument was also in the room. The UK Post Office scandal came up: an IT system deployed in the 1990s that produced incorrect accounting records for decades and led to the wrongful prosecution of hundreds of subpostmasters. Accepting error rates in high-stakes or regulated contexts isn't a theoretical risk. The dynamic equilibrium model, in which errors are expected, detected quickly, and corrected, is plausible for some applications, but it can't be applied uniformly. No clean consensus emerged.

Agent Orchestration: The Coordination Problem

The group discussed a direct contradiction in published research on multi-agent systems.

A Stanford study from January 2026 found that two agents given a shared task with minimal orchestration were 50% less likely to complete it correctly than a single agent. The failure mode: one agent would say it was going to handle a task, the other would accept that and stand down, and then the first agent would fail to do it. The group's observation: this is exactly how poorly coordinated human teams fail.

Anthropic's published results from an earlier period showed the opposite — Opus paired with a swarm of Sonnet instances produced better outcomes than either alone. The difference the group identified was orchestration quality. The Stanford setup had essentially none — no shared plan, no validation of whether agents had completed what they claimed. Swarm performance depends on the quality of the management layer above the agents. If an agent didn't do what it said it would, something in the system has to hold it accountable.
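The accountability idea can be sketched in a few lines. The following is a minimal illustration, not the Stanford or Anthropic setup: `Task`, `Orchestrator`, and the `verify` callback are hypothetical names, and the assumption is that each task has some independent check (a file exists, a test passes) that does not rely on the agent's own report.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Task:
    name: str
    # Independent check that the work actually exists (hypothetical callback).
    verify: Callable[[], bool]
    owner: str = ""
    done: bool = False


@dataclass
class Orchestrator:
    tasks: Dict[str, Task] = field(default_factory=dict)

    def add(self, task: Task) -> None:
        self.tasks[task.name] = task

    def claim(self, task_name: str, agent: str) -> bool:
        """Let one agent take ownership; refuse if someone already owns it."""
        task = self.tasks[task_name]
        if task.owner:
            return False
        task.owner = agent
        return True

    def report_done(self, task_name: str, agent: str) -> bool:
        """Accept a completion report only if the independent check passes."""
        task = self.tasks[task_name]
        if task.owner != agent:
            return False
        if task.verify():
            task.done = True
        else:
            # Release the claim so another agent can pick the task up.
            task.owner = ""
        return task.done

    def unfinished(self) -> List[str]:
        return [t.name for t in self.tasks.values() if not t.done]


if __name__ == "__main__":
    artifacts = set()
    orch = Orchestrator()
    orch.add(Task("write_report", verify=lambda: "report" in artifacts))
    orch.claim("write_report", "agent_a")
    print(orch.report_done("write_report", "agent_a"))  # nothing produced yet
    print(orch.unfinished())
```

The design choice is that a claim is never treated as evidence of completion: the failure mode described above, where one agent stands down because another said it would handle the task, is caught because the task stays in `unfinished()` until the check passes.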

A counterpoint from someone running an enterprise AI platform on-premise: large customers with significant GPU infrastructure prefer fewer large models over swarms of smaller ones, because the range of tasks their employees use AI for is too diverse to optimize a model roster in advance. With thousands of employees using AI for different purposes, you can't pre-select the right model for each task type. Swarm architectures suit cases where the task space is defined and constrained; large general models are more practical when it isn't.

A third pattern held in a pharma context: domain-specific expert models for narrow tasks, with a general orchestrating model managing the overall pipeline. The mixed setup outperformed any single approach.

A practical finding from testing done in the session: given the same task, a mid-tier model completed it in 25 minutes, a flagship model took 45 minutes (it overthinks), and the fastest/cheapest model took an hour (it makes more mistakes requiring more retries). Published benchmark rankings don't predict that.

How Software Development Is Changing

The existing development process — user stories, sprint planning, sprint review — is running into a structural mismatch with what agentic development looks like in practice.

A VP of Engineering at a large UK telecommunications company went fully agentic in mid-2025 and had to hire more product people because developers were completing stories faster than the product backlog could be maintained. The underlying problem: user stories are written for engineers who can fill in context, infer intent, and ask clarifying questions. Agents can't do that. A standard user story written for a human engineer doesn't contain enough information for an agent to execute correctly without producing something unexpected.

The direction several participants had settled on: spec-driven development, where the complete specification is defined before implementation begins and the agent's job is to bring the codebase into alignment with it. Tools are emerging that link spec, tests, and implementation explicitly — flagging anything in the spec not covered by tests, anything in tests with no corresponding spec, anything in implementation that deviates from either.
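The three checks described here reduce to set comparisons once spec items, tests, and code units share requirement identifiers. A minimal sketch follows, under the assumption that such identifiers exist. The function name, the `REQ-n` scheme, and the dictionary shapes are invented for illustration and do not describe any particular tool.

```python
from typing import Dict, List, Set


def traceability_gaps(
    spec_ids: Set[str],
    tests: Dict[str, Set[str]],
    implementation: Dict[str, Set[str]],
) -> Dict[str, List[str]]:
    """Report mismatches between a spec, its tests, and the implementation.

    `tests` and `implementation` map a test or module name to the set of
    spec IDs it claims to cover (a hypothetical tagging convention).
    """
    covered_by_tests = set().union(*tests.values())
    return {
        # Spec items that no test references.
        "spec_without_tests": sorted(spec_ids - covered_by_tests),
        # Tests that reference no current spec item.
        "tests_without_spec": sorted(
            name for name, ids in tests.items() if not ids & spec_ids
        ),
        # Code units that trace back to no current spec item.
        "code_without_spec": sorted(
            name for name, ids in implementation.items() if not ids & spec_ids
        ),
    }


if __name__ == "__main__":
    gaps = traceability_gaps(
        spec_ids={"REQ-1", "REQ-2", "REQ-3"},
        tests={"test_login": {"REQ-1"}, "test_legacy": {"REQ-9"}},
        implementation={"login.py": {"REQ-1"}, "export.py": set()},
    )
    for kind, names in gaps.items():
        print(kind, names)
```

This only checks that links exist. Whether a linked test actually exercises the behavior in the spec is a separate and harder question.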

One participant had built a fully functional, seven-tab marketing and sales web application in three days using roughly 20 prompts, deployed to production. None of those prompts were user stories in any recognizable sense. The unit of work has changed. The process built around the old unit of work will need to follow.

A more extreme version: software factories where an agent pipeline takes a product requirements document, decomposes it into epics, stories, and tasks, builds everything, and surfaces the output for evaluation. If the output is acceptable, you keep it. If not, you run the factory again. The economics that make this worth the disruption aren't about being twice as fast. They're about being orders of magnitude faster — at which point the question of whether to disrupt the existing process answers itself.

A group at an early-stage startup described a related constraint: their codebase was changing fast enough that written documentation became a liability. Anything written down was outdated within hours. They worked in close physical proximity and communicated verbally. Remote collaboration under those conditions produced wasted effort when someone acted on information that was a few hours old.

Security: The Missing Conversation

At a developer event earlier in the year, practitioners were asked how many of them were running AI coding assistants with full permissions enabled, outside any container. A significant proportion raised their hands. The group's view: that's an elementary security failure, and it's widespread.

The developers most active with AI tooling are often the ones least focused on securing the configuration of those tools. The platforms they're using haven't prioritized it — one major AI framework explicitly stated at launch that security was out of scope for the initial release, with adoption the priority. The result is a large population of AI-assisted development workflows running with minimal guardrails, in environments not designed to contain them.
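As one small example of a guardrail, here is a sketch of an allowlist check applied to shell commands an assistant proposes before anything is executed. It is an assumption-laden illustration: the allowed command set and the function name are hypothetical, and a check like this complements a container or sandbox rather than replacing one (an allowed binary can still do damage depending on its arguments).

```python
import shlex

# Hypothetical allowlist; a real one would be set per project and reviewed.
ALLOWED_COMMANDS = frozenset({"ls", "cat", "git", "pytest"})

# Characters that enable chaining, redirection, or substitution in a shell.
SHELL_METACHARACTERS = frozenset(";|&><`$\n")


def is_allowed(command: str) -> bool:
    """Return True only for a single, simple command whose binary is allowlisted."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes or similar: refuse rather than guess.
        return False
    return bool(tokens) and tokens[0] in ALLOWED_COMMANDS


if __name__ == "__main__":
    for cmd in ["git status", "rm -rf /", "ls; rm -rf /", "cat 'unterminated"]:
        print(repr(cmd), is_allowed(cmd))
```

The check is deny-by-default: anything that cannot be parsed or is not explicitly listed is rejected.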

The gap identified: nobody is building the enterprise security integration layer that would make AI development tools appropriate for regulated environments — SSO, directory services, cross-platform agent identity. The infrastructure work required to make this safe for production is largely unaddressed. One participant named it as a commercial opportunity sitting open.

What This Conversation Tells Us

The questions in the room weren't about whether agents work. They were about correctness models for production systems, failure modes in multi-agent coordination, how to write specifications that agents can act on reliably, and what responsible security architecture for AI-assisted development looks like.

The conversations at these re:cinq roundtables are getting harder and more specific. That's a reasonable indicator of where the market is.

re:cinq runs senior leaders roundtables regularly across Western Europe — curated, peer-level conversations for people working through AI transformation at an executive level. If you're navigating these questions and want to be considered for the next one, reach out at re-cinq.com.
