From the Prototype to Production: An Amsterdam Roundtable on AI in 2026

By Pini Reznik

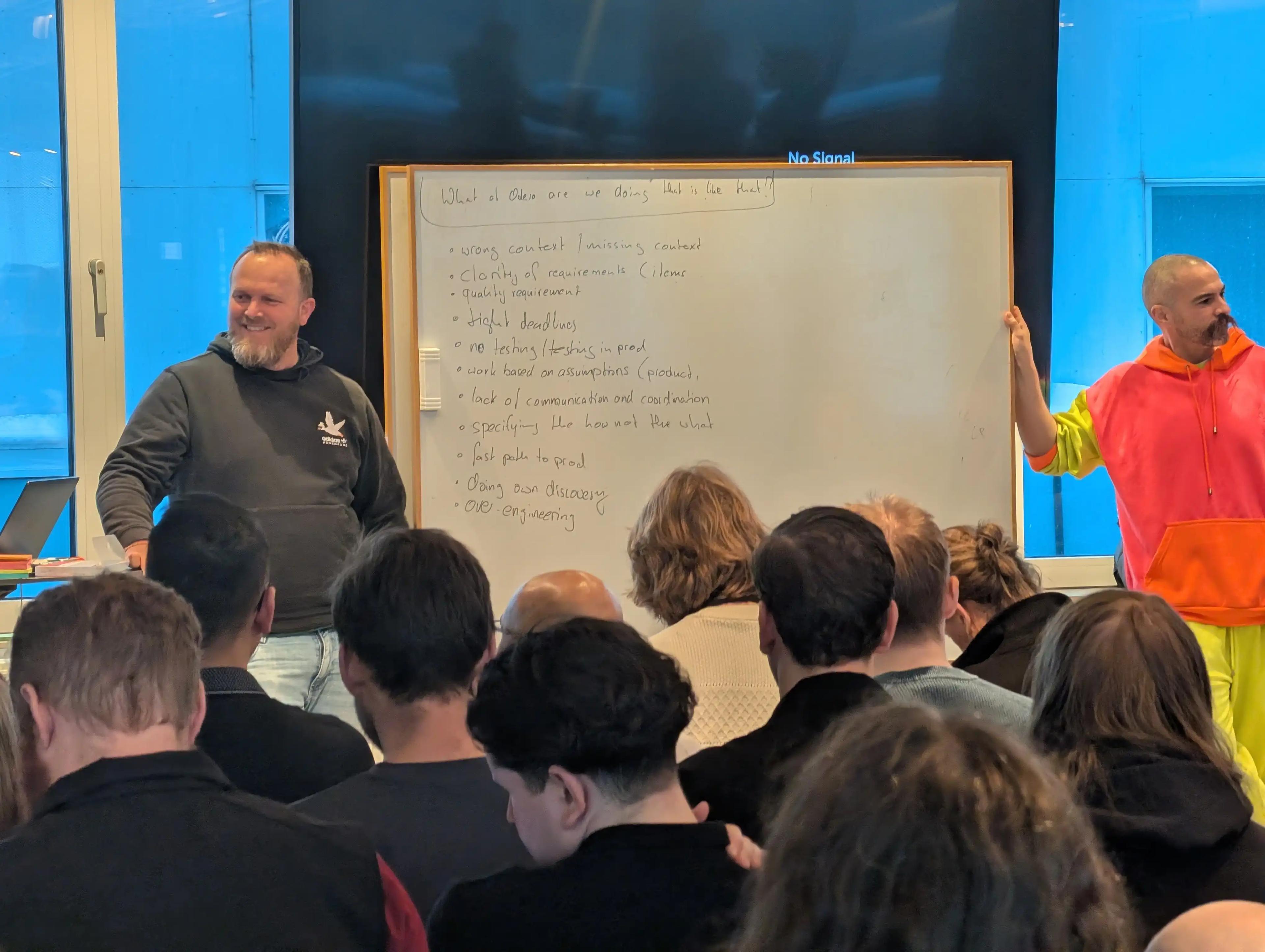

We host senior leaders roundtables regularly across Western Europe. Each group is small and hand-picked, with an even level of seniority across the table. The format is a facilitated roundtable: our team keeps the debate on topic and makes sure everyone gets to speak. At that level of seniority, in a room that size, the conversation tends to go to places it wouldn't in a larger or more public setting. What follows is an executive summary of the Amsterdam edition, held on February 5, 2026.

Who Was in the Room

- Engineering manager at a SaaS learning and content management platform serving enterprise clients across 20+ countries

- Director of AI markets at a European data center and compute infrastructure company

- Lead infrastructure architect at a major European defense and technology group

- Founder building an AI-powered maritime and logistics intelligence platform

- Founder of an AI research and intelligence startup working with institutional investors

- Engineering lead at a climate control and smart building systems company, with large-scale greenhouse energy management running in production

- Engineering or product leader at an online travel comparison platform

- Head of Maritime Data Science at the Dutch Ministry of Defence, running a large-scale digital transformation programme

- Senior engineering manager at a B2B e-commerce platform

- Technology leader at a global payments network

- Business developer focused on China-Europe cross-border trade and market access

- AI project lead at an AI implementation consultancy

What Does "Agent" Actually Mean?

The session opened on a definitional problem: the word "agent" is being applied to things that are quite different from each other.

NVIDIA publicly claims customers are running 37,000 agents. Jensen Huang has predicted 90-billion-plus agents by year-end. The threshold for what counts in those numbers isn't established. One participant's definition: autonomy plus reactivity. Another's: the distinguishing feature is self-correction — if it's conditional logic, it's an expert system. The group landed roughly where the evidence points: most of what enterprises describe as "agentic AI" is governed automation with a human review checkpoint, not autonomous agents operating independently.

A concrete example from the room: at one company, a ticket tagged with a specific label kicks off a workflow that opens a pull request to remove dead experiment code. A person still reviews it before merge. With 30 or more user-facing experiments running simultaneously, dead code cleanup had always been overlooked. The agent handles detection and preparation; the human handles the decision. That sits in the middle tier between scripted automation and full autonomy: a governed assistant operating within a defined scope, with human review before anything commits.
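The split between agent preparation and human decision in the dead-code cleanup example can be sketched roughly as follows. This is a minimal illustration, not the participant's actual system: the label name `dead-experiment-cleanup`, the `Ticket` and `PullRequest` types, and both function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set


class PrState(Enum):
    AWAITING_REVIEW = "awaiting_review"
    MERGED = "merged"
    REJECTED = "rejected"


@dataclass
class Ticket:
    ticket_id: str
    labels: Set[str]
    experiment_flag: str


@dataclass
class PullRequest:
    ticket_id: str
    description: str
    state: PrState = PrState.AWAITING_REVIEW


# Hypothetical label; the roundtable only mentioned "a specific label".
CLEANUP_LABEL = "dead-experiment-cleanup"


def prepare_cleanup(ticket: Ticket) -> Optional[PullRequest]:
    """Agent step: detect the trigger label and prepare a PR. It never merges."""
    if CLEANUP_LABEL not in ticket.labels:
        return None
    return PullRequest(
        ticket_id=ticket.ticket_id,
        description=f"Remove dead code for experiment '{ticket.experiment_flag}'",
    )


def review(pr: PullRequest, approved: bool) -> PullRequest:
    """Human step: the only place where a PR leaves the review state."""
    pr.state = PrState.MERGED if approved else PrState.REJECTED
    return pr
```

The design point is that `prepare_cleanup` has no code path that merges anything; a state change only happens through `review`, which stands in for the human checkpoint.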

In large enterprises, agent proliferation is already becoming a governance problem. One participant described a company where every department had built its own agents: HR, finance, support. When a reimbursement request comes in, an agent responds. If you dispute it, a human steps in. The structural question — who governs all of this, how you maintain standards across hundreds of agents built by separate teams — has no clean answer yet in most organisations.

Knowing When AI Accelerates You and When It Doesn't

An engineering lead described the challenge his team faces: recognising in advance whether applying AI to a given task will make it faster or slower. His examples were direct. Approving timesheets: AI performs poorly there. Summarizing 300 pages of EU cybersecurity legislation: AI handles it well and saves hours. The challenge is pattern recognition, not technique.

This held across multiple domains. In a radiology startup, human accuracy on medical billing codes — 17,000 possible codes, essentially a lookup problem — runs at 72–75%. A model trained specifically on the task reached 94%. But the larger time problem wasn't billing classification. Radiologists were spending around 11 hours a week writing structured reports while simultaneously examining patients. Redirecting AI toward speech-to-structured-report generation had a larger operational impact than the billing model. Knowing which problem to solve matters as much as knowing how to solve it.

The Citizen Developer Question

A company's CFO — no engineering background — spent a year working with AI tools and is now building internal CRMs and workflow systems, some in a day. The tools aren't production-grade in any enterprise sense, but they work. In combination with a technical lead who can evaluate and extend them, the team shipped internal finance, approval, and workflow tooling without buying SaaS subscriptions.

That drew an immediate parallel to MS Access and Visual Basic in the 1990s, which enabled non-developers to produce code that frequently became unmaintainable. The counter: the people who were building in Excel and Access in the 1990s are now building in real programming environments, and the transition went reasonably well for most of them. Accessibility appears to expand competence more often than it degrades quality.

The structural risk is real though. When citizen developers build tools and hand them to IT for production deployment, the volume of low-quality submissions can overwhelm any review and standardisation process. One participant offered a frame: building a shed in your backyard doesn't require permits; running an apartment building does. Whether enterprises will enforce that distinction is an open question, and several people in the room had opinions about how it would go.

The broader conclusion: the expansion of software creation is going to happen primarily outside development teams — across finance, operations, sales, support. The developer profession will see modest growth in absolute numbers. Software production by people who aren't developers will grow substantially.

Organizational Adoption: The Range

An insurance company whose support team had been growing linearly with headcount received a board mandate: 50% reduction in six months using AI.

A real estate management company with a 100-person development team is now training everyone on AI-assisted development. Their concern is existential. Software they built over years by dozens of engineers can now be functionally replicated in three weeks. The competitive moat built on software complexity is narrowing.

A third pattern: one participant at a large technology company described a decision two years ago to treat commits without AI tool attribution as worth a conversation. After a year, every engineer had been retitled and required to build something with AI. Every six months, the faster movers get promoted.

The bottom-up version also appeared. At one company, AI adoption started with engineering experiments, formalized into sessions where teams demonstrate using AI on real tickets, and is now being systematized across the development lifecycle — mapping which workflow stages are candidates for governed automation and where human checkpoints remain necessary.

The room's observation: adoption driven by mandate without psychological safety tends to produce compliance. The organisations seeing genuine productivity shifts are the ones where experimentation is encouraged and failure is shared alongside success.

Production Access and Trust

One question went around the table: would you give an agent write or delete access to a production database?

Answers ranged from "no, for the same reasons I wouldn't give it to an individual developer without controls" to "yes, with full audit trails, scoped permissions, and rollback capability." The consensus: agents in production are appropriate when they have traceable identities, permissions limited to their specific task, and human-in-the-loop requirements for irreversible actions.
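One way to picture the three conditions from that consensus (traceable identity, task-scoped permissions, human sign-off for irreversible actions) is a small authorization gate. This is a sketch under stated assumptions: the `AgentIdentity` and `AuditEntry` types, the `authorize` function, and the choice of which actions count as irreversible are all hypothetical, and rollback is not modeled here.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional

# Assumption: which actions count as irreversible is a policy choice.
IRREVERSIBLE: FrozenSet[str] = frozenset({"delete", "drop"})


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str                    # traceable identity
    allowed_actions: FrozenSet[str]  # permissions scoped to the task
    allowed_tables: FrozenSet[str]


@dataclass
class AuditEntry:
    agent_id: str
    action: str
    table: str
    decision: str


def authorize(
    agent: AgentIdentity,
    action: str,
    table: str,
    audit_log: List[AuditEntry],
    human_approver: Optional[str] = None,
) -> str:
    """Return 'allowed', 'denied', or 'needs_human_approval'; always audit."""
    if action not in agent.allowed_actions or table not in agent.allowed_tables:
        decision = "denied"
    elif action in IRREVERSIBLE and human_approver is None:
        decision = "needs_human_approval"
    else:
        decision = "allowed"
    # Every request is recorded, including denied ones.
    audit_log.append(AuditEntry(agent.agent_id, action, table, decision))
    return decision
```

In this sketch an out-of-scope request is denied outright, while an in-scope but irreversible request is held until a named human approver is supplied.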

The self-driving car parallel came up: statistically, autonomous vehicles are already safer than human drivers. Yet a single AI-caused accident receives scrutiny that 100,000 human-caused accidents do not, because with a human driver accountability is unambiguous and with an AI it is not. That asymmetry shapes adoption resistance in ways that improving AI performance alone doesn't resolve.

The room's counterpoint was a concrete example. Climate control systems in large greenhouse operations already run on AI that manages conditions for an entire crop — a year of growth, committed contracts, and the revenue depending on it. Those growers trust the AI with it. They also maintain redundant sensor systems as a safety net. The model of AI authority with layered human oversight is already operating in some industries; enterprise software isn't necessarily last to get there.

The Future of the Developer

The framing the group explicitly rejected: 10x faster development means 10x fewer developers.

The Jevons Paradox came up by name. When a technology reduces the cost of producing something, consumption of that thing has historically tended to increase. Higher-level programming languages, cloud infrastructure, low-code tools: none of them reduced developer headcount. They expanded what got built. AI-assisted development is likely to follow the same pattern: more software, in more places, by more people, including many who would not previously have been considered developers.

The composition of who does the work will shift, though. Senior developers who can define intent, evaluate architecture, and review AI output at a system level become more important. Junior developers — whose path to senior historically involved the slow, difficult experience of writing and debugging code — face a less clear route to building that foundation. The pipeline that produces senior developers needs a different design now.

One additional observation: the cloud engineering analogy is relevant. When cloud infrastructure emerged, a generation of engineers stopped needing to understand Linux networking. Most never learned it, and it was broadly fine. Whether a generation of AI-era engineers will similarly bypass foundational layers, and at what cost, is a question the room couldn't answer.

What This Conversation Tells Us

The theoretical debate about whether AI is capable barely surfaced. The questions were about governance structures, production access, how to manage an expanding population of agents nobody has full oversight of, and what organisations look like when the software development moat disappears. Those are harder problems than the ones that dominated these conversations a year ago, and they're what re:cinq builds these events to work through.

re:cinq runs senior leaders roundtables regularly across Western Europe — curated, peer-level conversations for people working through AI transformation at an executive level. If you're navigating these questions and want to be considered for the next one, reach out at re-cinq.com.
