Why Your Engineers Are Resisting AI — And What To Do About It

By re:cinq

Engineering leaders working through AI adoption describe a pattern that tends to repeat: training is delivered, developers understand the tools, and adoption still doesn't move. Another tool gets deployed. More training is scheduled. The numbers stay flat.

When the explanation eventually arrives, it's usually "our engineers are resistant to change" — which is frustrating because it's both probably true and not particularly useful.

What makes it difficult is that resistance in an engineering organisation isn't one thing. It shows up differently depending on where it's coming from, and the interventions that work for one type don't work for another. Diagnosing which form you're dealing with is the first step.

Our Head of Product, Daniel Jones, spoke at the "AI for the Rest of Us" meetup in London (Meetup #13, February 19, 2026). The panel discussion that followed, with Norberto Lopes, VP Engineering at incident.io, and Corey Leigh Latislaw, Head of Engineering at JustEat Takeaway, mapped out the pattern across several organisations. What came out of it was a clearer picture of what's actually happening and where different responses make sense.

Three resistance profiles — from one person

Corey's trajectory across three organisations is worth describing in detail, because it illustrates how differently the same underlying problem can manifest.

At a consulting firm where she was Director of Engineering, the business was resistant and the engineers were enthusiastic. The actual blocker wasn't the engineering team at all — it was legal, IT, and compliance. Before engineers could experiment with AI tools in any meaningful way, the organisation needed sandboxed environments that met its security and data requirements. That meant a lot of conversations with functions that had nothing to do with engineering. Once those environments existed, adoption moved fast: 50 projects in nine months.

At Trainline, the picture was different. GitHub Copilot had been deployed and quietly dropped. There was no active resistance from engineers, no stakeholder opposition — just stagnation. Nobody was pushing back. Nobody was pushing forward either. The tool was there. People weren't using it. The organisation hadn't created the conditions for anyone to understand why they should.

At JustEat Takeaway, the approach was different from the start. Adoption was a stated goal for the whole engineering department. A platform team ran what they call a "dev rail" — creating structured environments and running regular code-along sessions (COTAs) so developers could engage with the tooling in a supported, low-pressure way. The framing was consistently one of enabling rather than mandating.

Three organisations, three different situations. The consultancy had a governance problem. Trainline had a motivation problem. JustEat solved for both from the beginning.

The identity layer

Beneath the organisational patterns, there's a psychological dynamic that applies specifically to agentic coding and that gets less attention than it deserves.

Experienced developers have built their professional credibility around something specific: the ability to understand a system deeply, write precise and deliberate code, and be accountable for what they produce. Agentic coding asks them to work differently: to express intent and evaluate output rather than construct solutions manually, and to work with a tool that can produce different results from the same prompt on different runs.

For engineers whose professional identity is built around technical precision, that shift involves letting go of habits that have been professionally valuable. This tends not to show up as a stated objection. It shows up as low-level disengagement — sitting through training without engaging, going back to existing workflows when sessions end, finding reasons why the tools don't quite fit the work.

This is why you can deliver a technically sound training, have developers walk out understanding the tools, and still see adoption rates barely change. Understanding the tools and being ready to work differently are different conditions, and training addresses only one of them.

Accountability drift

Norberto raised a form of resistance that's less visible than the others and shows up after adoption has nominally started: engineers using the tools, but disengaging from ownership of what the tools produce.

The pattern looks like this. A developer uses an agentic coding tool to produce a pull request. The PR goes up. When a reviewer pushes back on something, the response is "the AI wrote that" — implicitly or explicitly placing the accountability on the tool rather than the developer. The code gets through. Standards drift. The review process starts functioning differently because the social contract around ownership has changed.

Norberto's framing for addressing this: the AI cannot go on the chopping block. Whatever the tooling contributes, the developer owns the output. He used an analogy from voice messaging — when you send a voice note, you put all the work on the listener; sending a poorly structured AI-generated PR is the same move. The developer's job hasn't changed in terms of accountability, only in terms of how the code is produced.

Getting this framing established early, before adoption scales, is easier than retrofitting it after the review culture has already shifted.

The difference between forcing and enabling

Corey's contrast between the JustEat approach and what she'd seen elsewhere is worth naming directly: the JustEat model was designed to inspire and enable, not to mandate and measure.

The distinction matters because developers who've been mandated to adopt a tool respond differently from developers who've been given the support to explore it. The mandate approach tends to produce compliance without engagement: developers who can demonstrate the tool in a review but don't use it in their actual work. The enablement approach is slower to set up and requires a functioning platform team, but it produces practitioners rather than attendees.

What this means for where to start

The starting point depends on which type of resistance is actually present.

If the blocker is governance — if engineers can't safely experiment because the legal and compliance infrastructure doesn't exist — the work is with IT, legal, and security, not the engineering team. Training before that infrastructure is in place is wasted.

If the problem is stagnation — no active resistance, no active adoption — the question is whether people understand why this matters to them specifically. Demonstrations and mandates both tend to fail here. What tends to work is structured exposure in a supported context: exactly what the COTA model at JustEat provides.

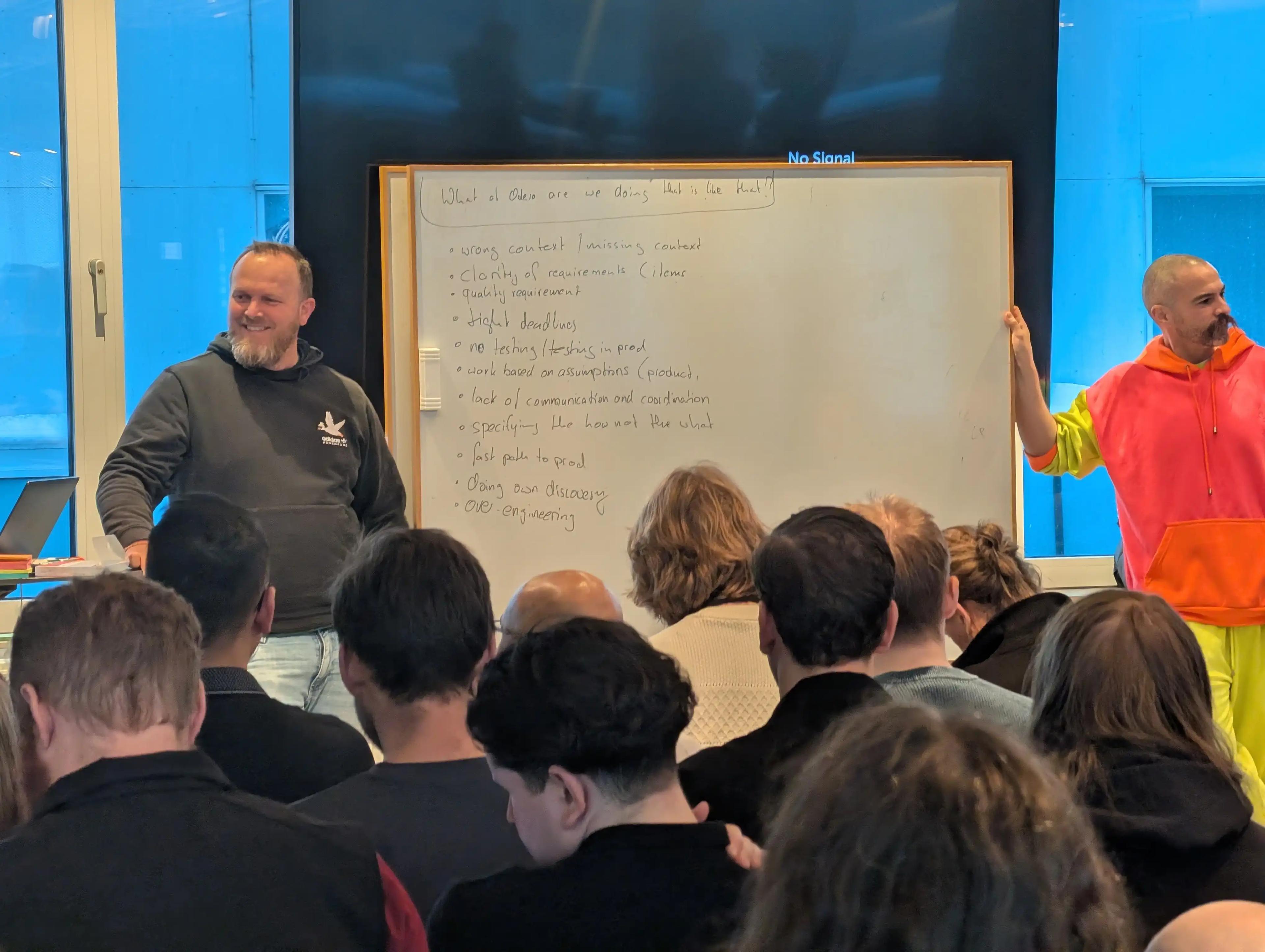

If the resistance is identity-level — engineers who understand the tools and still won't use them — addressing it requires the kind of receptiveness work we've described from the Odevo engagement: CTO alignment that connects to a business case, shared context across teams, and structured space to surface and work through concerns before training begins.

And if accountability drift is the concern — or a likely future one as adoption scales — establishing the ownership framing early is significantly easier than reestablishing it later.

The organisations that move through AI adoption most effectively tend to have someone whose job it is to think about which of these is actually the problem. Most don't. They deploy a tool, run a training, and wonder why it didn't work.
