How We Moved 100 Developers to Agentic Coding in Six Weeks

By re:cinq

Odevo came to us with 100+ developers who needed to shift to agentic coding, a technology estate spanning four programming languages accumulated through years of acquisitions, and — across much of the engineering organisation — significant reluctance to change how they worked.

That reluctance was the part of the engagement we hadn't fully anticipated. It was also why our approach mattered as much as it did.

Our Head of Product, Daniel Jones, spoke about this at the "AI for the Rest of Us" meetup in London (Meetup #13, February 19, 2026). This post draws on that talk.

The starting point

Odevo is a major Swedish real estate management software company, roughly $3B in revenue. Growth through acquisitions had left the engineering organisation with PHP, Java, .NET, and JavaScript running in parallel across teams that had, until recently, been separate companies.

The goal was to get ahead of industry change before competitors did. At that scale and with that level of technical diversity, leaving developers to discover and adopt agentic tools on their own would not produce a coherent capability. They needed a structured approach — one that would leave the engineering organisation operating differently, rather than just having been exposed to something new.

They also had 18 months of stalled projects in the backlog.

Discovery first

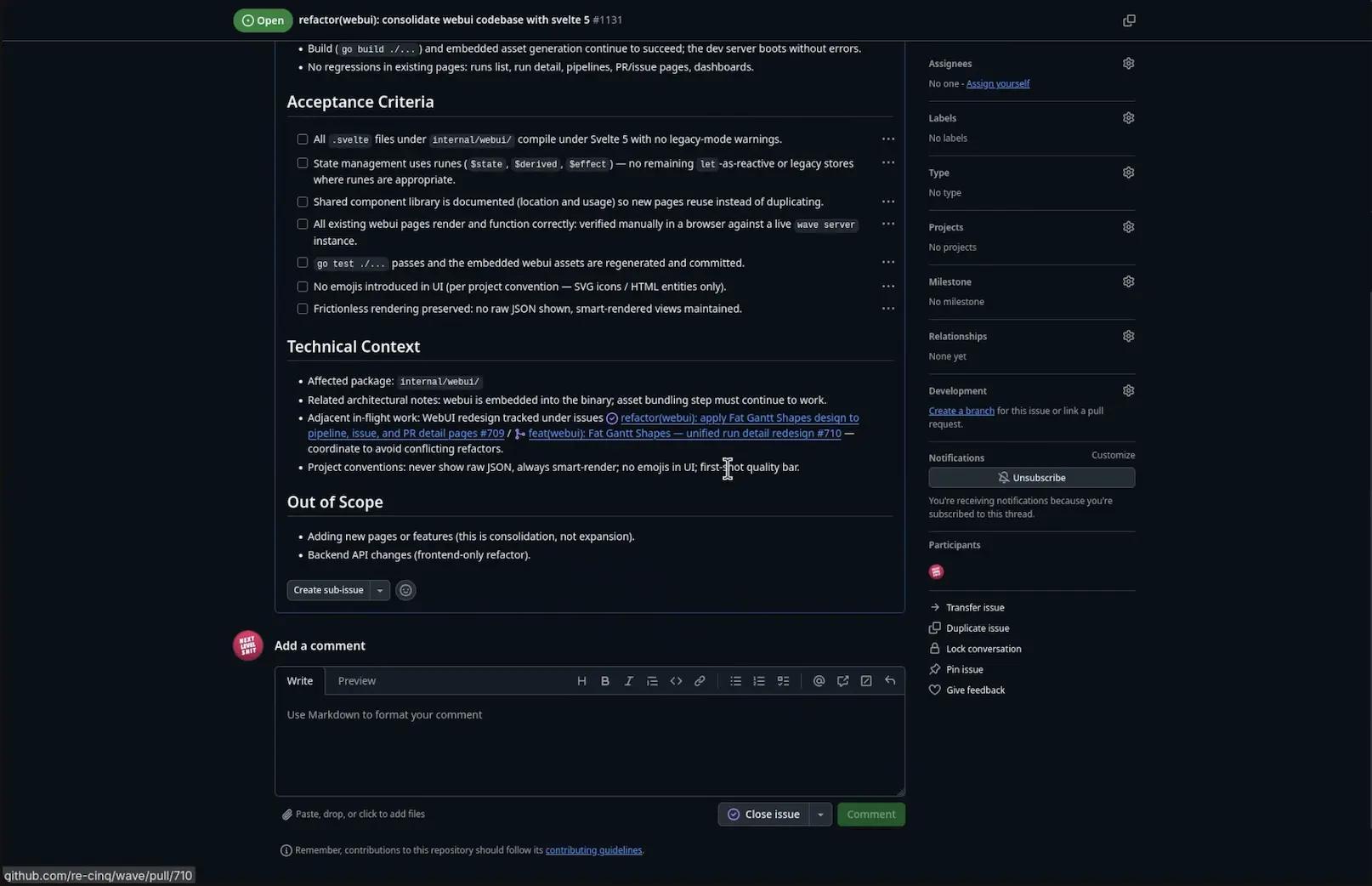

Before designing any training, we ran a structured assessment. We analysed Jira stories and CI/CD pipelines, mapped value streams, and assessed teams against a consistent set of maturity metrics: test coverage, batch size, version control fluency, deployment frequency, and observability.

The goal was to understand where work was getting stuck and which teams were positioned to move quickly. Odevo's engineering organisation had significantly different levels of technical maturity across teams, shaped partly by acquisition history. Going in with a uniform training rollout would have moved too fast for teams that needed different groundwork and too slowly for those that were ready to go.
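As an illustration of how an assessment like this could feed into rollout planning, here is a minimal sketch that averages ratings on the five maturity metrics and sorts teams into cohorts. The 0 to 4 rating scale, the thresholds, the cohort names, and the team data are all assumptions made for the example; they are not the scoring model used at Odevo.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class TeamMaturity:
    # Hypothetical 0-4 ratings, one per metric named in the assessment.
    test_coverage: int
    batch_size: int
    version_control_fluency: int
    deployment_frequency: int
    observability: int

    def score(self) -> float:
        """Unweighted mean of all metric ratings."""
        values = [getattr(self, f.name) for f in fields(self)]
        return sum(values) / len(values)


def cohort(m: TeamMaturity, ready_at: float = 3.0, groundwork_below: float = 2.0) -> str:
    """Map a maturity score to an illustrative rollout cohort."""
    s = m.score()
    if s >= ready_at:
        return "fast-track"
    if s < groundwork_below:
        return "groundwork-first"
    return "standard"


# Made-up teams, purely for demonstration.
teams = {
    "team-a": TeamMaturity(4, 3, 4, 3, 3),
    "team-b": TeamMaturity(1, 1, 2, 1, 2),
    "team-c": TeamMaturity(3, 2, 3, 2, 2),
}
assignments = {name: cohort(m) for name, m in teams.items()}
print(assignments)
```

An unweighted mean is the simplest possible choice here. A real assessment would likely weight metrics differently and combine them with qualitative findings from the value stream mapping.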

The assessment shaped what we built.

Building receptiveness before training began

Most AI training rollouts skip this phase. It is the hardest to schedule, the slowest to show results, and — in our experience across engagements — the most consequential.

Experienced developers have spent years building professional credibility around something specific: the ability to understand a system deeply, write precise code, and be accountable for what they produce. Agentic coding asks them to work differently. To express intent and evaluate output rather than construct solutions manually. To work with a tool that produces different results from the same prompt on different runs. For engineers whose professional identity is built around technical precision, that shift involves letting go of habits that have been professionally valuable.

This tends not to show up as an explicit objection. It shows up as low-level disengagement — sitting through training without engaging, going back to existing workflows when sessions end, finding reasons why the tools don't fit the work.

We addressed this before training began. Odevo's CTO made the strategic direction clear and public — this was a company priority, connected to a business case, not an optional experiment. We ran an internal conference to create shared context across the engineering organisation, making the shift visible as something happening across the whole org rather than to individual teams in isolation. And we facilitated liberating structures workshops: formats designed to surface real concerns and build genuine consensus, rather than manage resistance away with positive framing.

The objective was to have developers ready to engage with the training before it started.

Six weeks of structured enablement

The curriculum ran for six weeks with weekly sessions. The pacing was deliberate — spaced repetition is more effective than a concentrated course, and with tools that behave non-deterministically, developers need time between sessions to apply what they've learned to actual work.

We spent significant time on how models fail before teaching what they do well. Hallucinations cluster at the "sweet spot" of specificity, where a request is similar enough to training data to generate a confident-sounding response but distinct enough that the response is wrong. Context pollution happens when accumulated conversation history starts distorting outputs. Running too many MCP servers at once degrades performance in ways that aren't obvious until something breaks in production.
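To make context pollution concrete, the sketch below shows one common mitigation: keeping the opening instruction message and only the most recent turns that fit a fixed budget. It is an illustration rather than material from the curriculum. The `trim_history` function, the message shape, and the word-count budget (a crude stand-in for token counting) are all assumptions.

```python
def trim_history(messages, budget_words=50):
    """Keep the first message plus the newest messages that fit the budget.

    Word count is a rough, hypothetical proxy for tokens; a real tool
    would use the model's own tokenizer.
    """
    if not messages:
        return []
    head, tail = messages[0], messages[1:]
    remaining = budget_words - len(head["content"].split())
    kept = []
    # Walk backwards from the newest turn and stop at the first one that
    # no longer fits, so the retained history stays contiguous.
    for msg in reversed(tail):
        cost = len(msg["content"].split())
        if cost > remaining:
            break
        kept.append(msg)
        remaining -= cost
    return [head] + list(reversed(kept))


history = [
    {"role": "system", "content": "You refactor PHP code carefully."},
    {"role": "user", "content": "old " * 40},
    {"role": "assistant", "content": "ten words " * 5},
    {"role": "user", "content": "latest question about the failing test"},
]
trimmed = trim_history(history, budget_words=25)
print([m["role"] for m in trimmed])
```

Dropping old turns is the bluntest option. Starting a fresh session with a short written summary of the task is another approach that targets the same failure mode.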

Developers who came into training already understanding these failure modes were more effective than those who'd only seen the tools performing well. Knowing where and why something breaks produces calibrated confidence — the kind that holds up when the tool is applied to real work under real conditions, rather than a controlled demonstration.

Each session included interactive elements: drawing workflow memory diagrams, testing tools against real items from their own backlogs. Takeaway tasks between sessions kept what developers had learned in contact with actual work.

What happened

Agent usage across the organisation increased by 500% over the six weeks. Teams shipped projects that had been stalled for 18 months. Critical production bugs were resolved through agentic workflows.

One developer built a mobile app in three days — a project that had been blocked for a year and a half. It's now live in both stores.

Tomasz Maj, Head of Product Ops & Development at Odevo, described the outcome directly: teams that came in sceptical became active adopters. The reluctance that typically slows early adoption — what he called the "scared curve" — flattened across the engineering department.

What produced those results

The results came from technical enablement and the organisational conditions that allowed it to land, working together. Getting a large engineering organisation to use agentic tools at production quality requires structured work on both sides.

Starting with discovery, spending dedicated time on building receptiveness before training begins, and structuring the curriculum around how models fail before how they succeed — that sequence takes longer upfront than going straight to a training programme. At Odevo, the outcomes were worth that investment.

Daniel's full talk is available through the "AI for the Rest of Us" meetup community and covers the methodology in more depth.
