Engineering Velocity Is Unlocked. Now What?

By re:cinq

Most of the conversation around AI adoption in engineering organisations focuses on the path to adoption: how to get engineers using the tools, what training works, how to manage the resistance. That conversation ends when adoption succeeds.

What happens after that doesn't get much attention. In our experience, it's where the harder questions start.

Our Head of Product Daniel Jones and the expert panel at AI for the Rest of Us, a monthly London meetup that brings together engineering leaders navigating AI adoption, spent significant time on this. The panel included Norberto Lopes, VP Engineering at incident.io, and Corey Leigh Latislaw, Head of Engineering at JustEat Takeaway. Adoption tends to be treated as the finish line. In practice, it's where a new set of problems becomes visible.

Velocity reveals what was already broken

Norberto described the core principle clearly: AI amplifies existing engineering practices. Fast feedback loops get faster. Slow ones become more visibly broken.

If your deployment process is efficient, higher development velocity translates directly into more shipped work. If your product prioritisation process is slow, the same velocity increase floods it. If your code review culture is healthy, agentic coding accelerates throughput. If it isn't, problems that were manageable at lower velocity become unmanageable at higher velocity.

This means that, for most organisations, the velocity unlock doesn't produce a uniform improvement across the delivery process. Instead, it shifts where the constraint sits. The question is whether you've prepared for that shift, or whether you only discover the new bottleneck when work starts piling up behind it.

The Fruition case

Elliot Beattie described what this looked like in practice at Fruition: a 250% increase in engineering velocity. The engineering team was moving faster than it ever had.

The product team was caught on the back foot for three to four months. QA couldn't keep pace and required significant hiring to catch up. The development stage had stopped being the slowest part of the delivery process, and every other function in the pipeline was suddenly exposed.

This is not a failure story. Fruition worked through it. But the disruption was real, and it came directly from the thing that had gone right.

The team ratio problem

The old staffing model for software engineering organisations — roughly one product manager for every eight developers — was calibrated for a world where developers were the rate-limiting factor. When they stop being the rate-limiting factor, the ratio breaks.

Engineering leaders running agentic adoption programmes are finding that developers can't be kept productively busy under the old model. There isn't enough product direction, design input, or prioritised work to absorb the capacity that becomes available. The assumption that the development stage would always be where work backed up no longer holds.

The organisations working through this aren't adding more developers. Several are restructuring around smaller, blended teams — a product engineer with direct customer access, working alongside a designer or product manager, able to move from customer input to shipped feature without the handoff overhead that larger team structures require. The unit of delivery is changing shape.

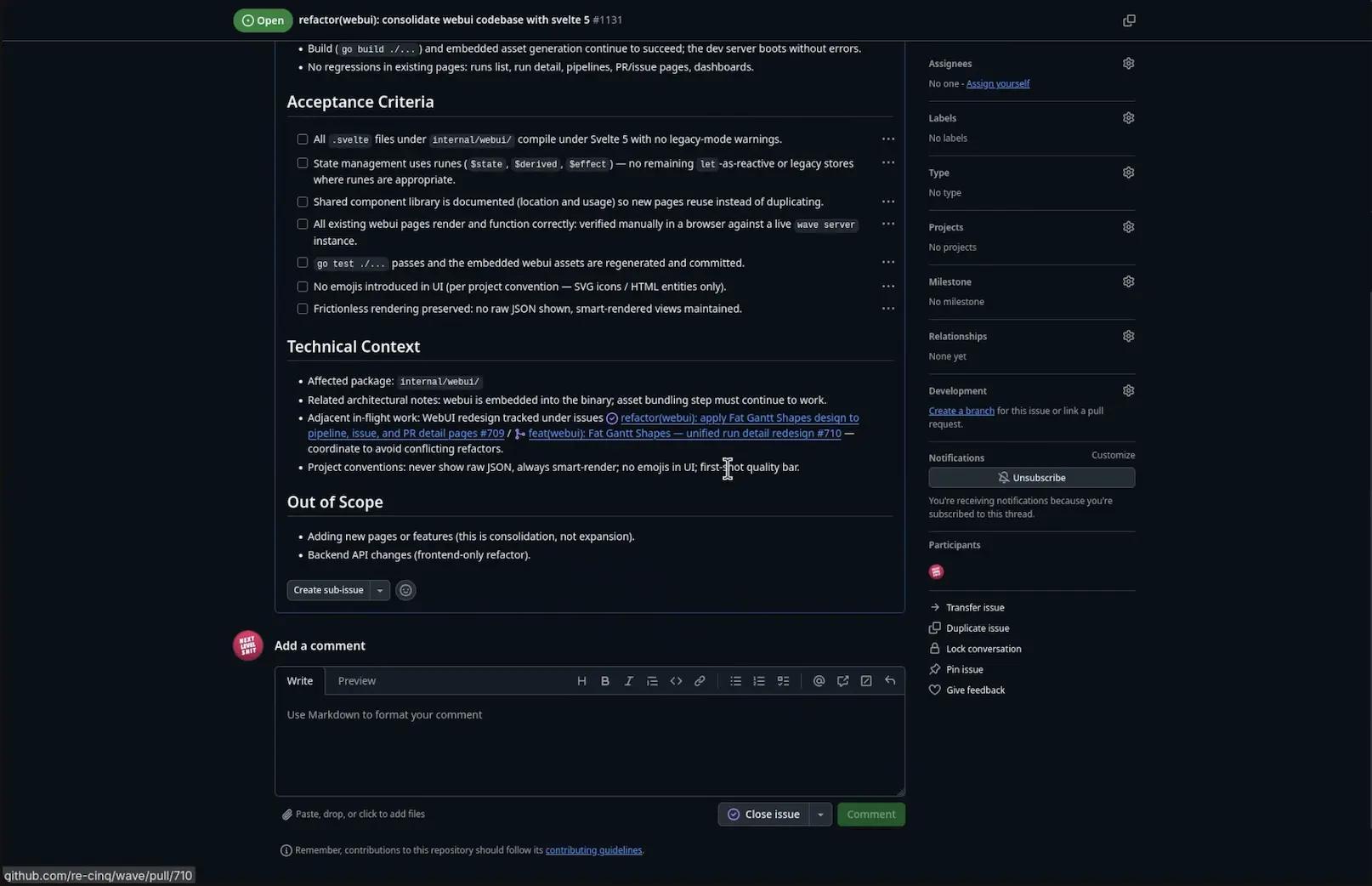

Where code review breaks down

One specific bottleneck Norberto highlighted deserves attention because it's not immediately obvious and it compounds quickly.

As agentic coding increases the volume of code being produced, code review becomes a more significant part of each developer's day. That's manageable at first. What makes it unmanageable is when the social contract around ownership starts to erode.

When developers are accountable for code they wrote by hand, the review process carries an implicit expectation: the author has already thought the code through and is asking for feedback on it. When AI-generated code enters the review queue without that level of authorial engagement, the reviewer ends up doing work the author should have done. To use Norberto's analogy, the reviewer is on the receiving end of a voice note: the sender has pushed all the effort onto the listener instead of taking the time to communicate clearly.

This dynamic can shift review culture in ways that are hard to reverse. Establishing clear ownership expectations early — the AI produces code, the developer owns it — is significantly easier than re-establishing them once the pattern has formed.

Mapping the full delivery lifecycle

Corey described what she's doing at JustEat Takeaway in response to this: mapping the entire software delivery lifecycle, not just the development portion, before the velocity increase arrives in full.

The development stage is no longer the only place to look. Design, product prioritisation, QA, code review, deployment, and customer feedback loops are all candidates for where the new constraint will form. Until you've mapped them, you don't know which one will slow first.

This is practical work that most organisations defer because the development bottleneck has always been more visible. When it's removed, the deferred mapping becomes urgent. Doing it in advance changes what you're managing from a crisis to a transition.
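To make the idea of a shifting constraint concrete, here is a minimal Python sketch. It assumes a deliberately simple model in which a delivery pipeline can ship no faster than its slowest stage. The stage names are the ones listed above; every capacity figure, and the 3x development speed-up, is hypothetical and invented for illustration, not taken from Fruition, JustEat Takeaway or incident.io.

```python
# A minimal sketch of the "shifting constraint" idea: a delivery pipeline can
# only ship as fast as its slowest stage. All capacities are hypothetical
# numbers (work items per week), chosen for illustration only.


def bottleneck(capacities: dict[str, float]) -> tuple[str, float]:
    """Return the stage with the lowest capacity, together with that capacity."""
    stage = min(capacities, key=capacities.get)
    return stage, capacities[stage]


def with_dev_speedup(capacities: dict[str, float], factor: float) -> dict[str, float]:
    """Return a copy of the pipeline with only the development stage scaled."""
    scaled = dict(capacities)
    scaled["development"] *= factor
    return scaled


# Hypothetical weekly capacities before AI adoption.
before = {
    "design": 14,
    "product prioritisation": 12,
    "development": 10,
    "code review": 13,
    "QA": 11,
    "deployment": 20,
    "customer feedback": 16,
}

# Hypothetical 3x speed-up applied to development only.
after = with_dev_speedup(before, 3.0)

print(bottleneck(before))  # ('development', 10)
print(bottleneck(after))   # ('QA', 11)
```

With these made-up numbers, tripling development capacity raises end-to-end throughput only from 10 to 11 items a week, because QA becomes the new constraint. The specific figures don't matter; the exercise does. Once every stage is mapped with honest estimates, the next bottleneck is visible before the velocity arrives.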

The platform function becomes more important, not less

A structural implication that came up in both the JustEat Takeaway and incident.io examples: as the team composition shifts and delivery velocity increases, the platform team's role doesn't shrink. It expands.

Someone has to maintain the compliant environments in which agentic coding happens. Someone has to keep tooling current as the models and the integrations evolve. Someone has to run the ongoing enablement — the code-along sessions, the standards, the patterns — that keeps adoption from fragmenting into inconsistent practices across teams.

The platform function that enables agentic coding is not a set-it-and-forget-it infrastructure investment. It's an ongoing operational capability. Organisations that treat it as one-time setup tend to find that adoption quality degrades over time.

Daniel described a complementary pattern: forward-deployed engineers in direct contact with customers, embedded close enough to end users to close the feedback loop that blended teams need in order to move fast. The combination of a capable platform team and forward-deployed engineers is emerging as the structural model that supports sustained velocity, not just the initial unlock.

The question worth asking now

If your engineering organisation is in the middle of an AI adoption programme, or approaching the point where adoption is beginning to take hold, the question worth asking is: where will the velocity go?

Not which metrics will improve in the development stage — those are the easy ones. Where will the capacity back up? Which functions have been sized for a world where development was the constraint? What changes in team structure, delivery process, and organisational capacity need to happen before the velocity arrives, rather than in response to it?

Most organisations find out the answers by running into the problems. The ones that think through them in advance have a noticeably different experience of the transition.

These are the kinds of second-order questions we wrote the book on — literally. Download the free AI-Native Engineering guide to see how engineering organisations are restructuring around AI adoption, not just deploying tools.
