Simple Tools, Smarter Agents

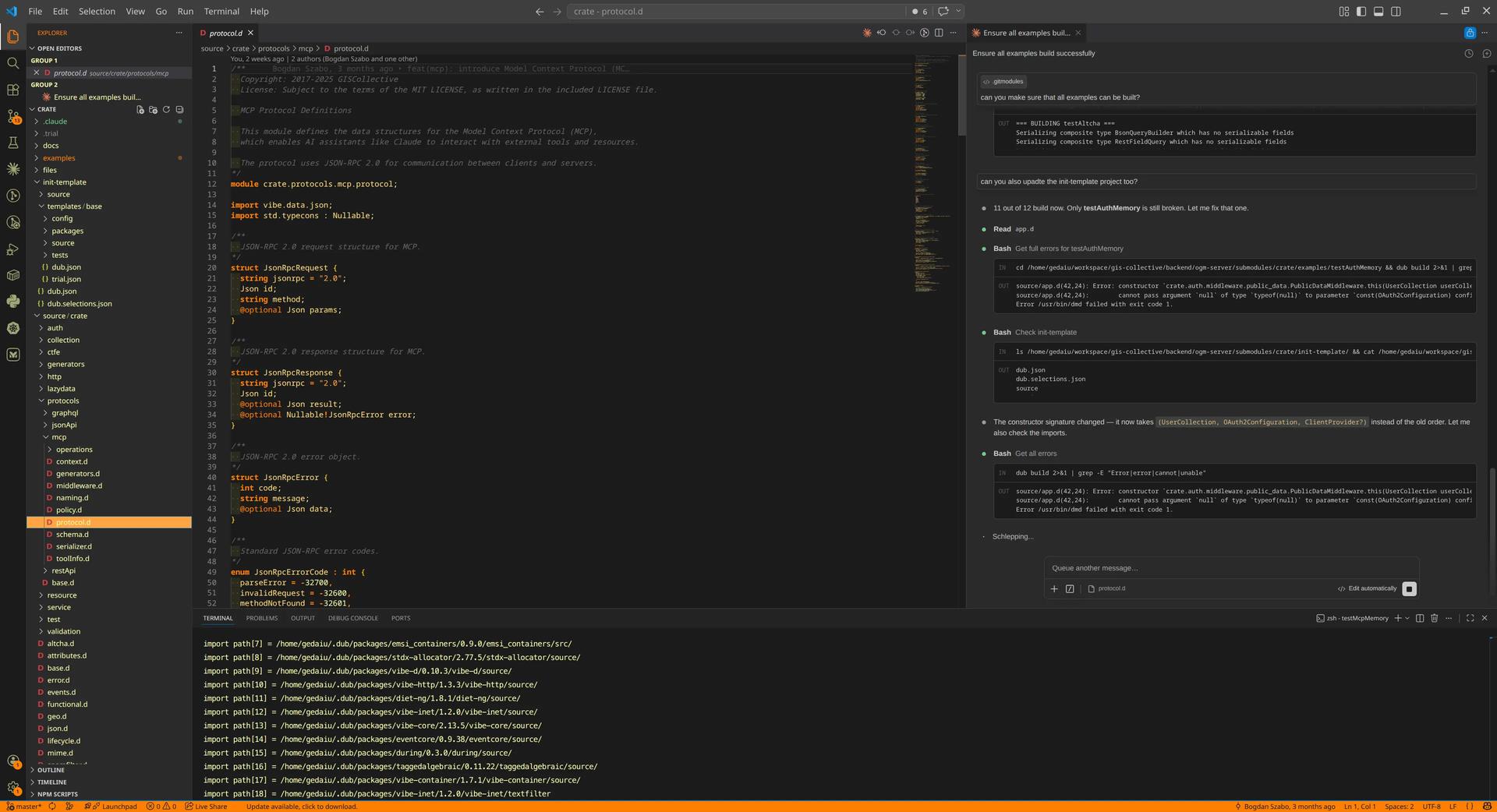

We've all been there: you're building a library, and you find yourself applying the same "code recipes" over and over again. To solve this in my D language library, I built an automated API generator. It looks at your data structures and automatically builds the interface. It's efficient, it's clean, and it works perfectly for REST.

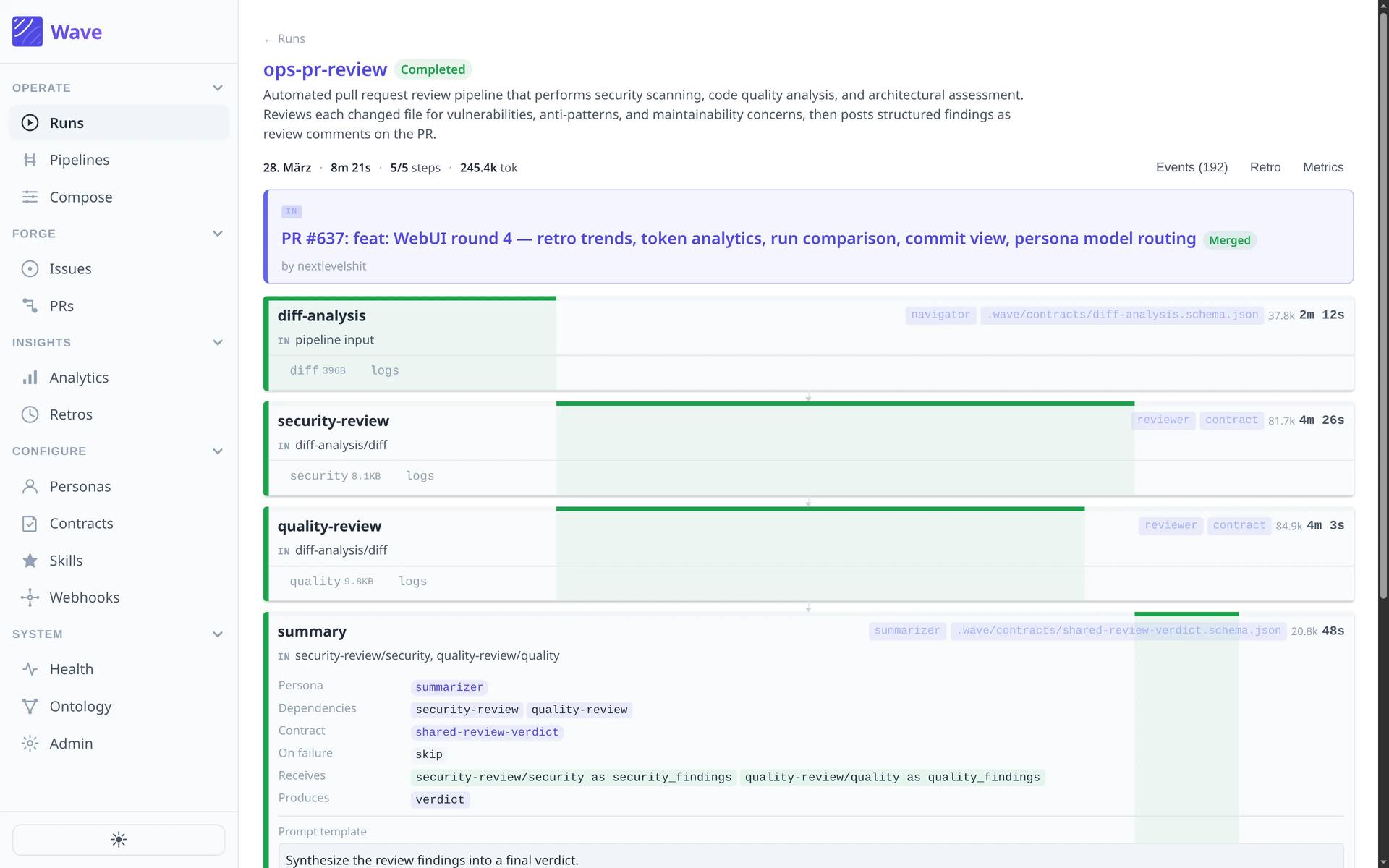

Then I decided to give my library a new superpower: a Model Context Protocol (MCP) generator.

The goal was simple: the same seamless automation I had with REST, but for an AI agent. I quickly learned that what makes a "good" REST API makes for a "terrible" MCP server.

The Naive Mistake: Mapping REST to AI

My first implementation was what I call "The REST Mirror." If an application had 25 data models, the library would generate 25 sets of tools. Each model got its own get, list, create, update, replace, and delete operation.

Essentially, I was handing the AI a 150-page manual on how to talk to my database. Here is what just one of those tools (get_organization) looked like:

    {
      "name": "get_organization",
      "description": "Get a single organization by ID",
      "inputSchema": {
        "type": "object",
        "properties": {
          "name": { "type": "string" },
          "subscription": {
            "type": "object",
            "properties": {
              "invoices": {
                "type": "object",
                "properties": {
                  "details": { "type": "string" },
                  "hours": { "type": "number" },
                  "date": { "type": "string", "format": "date-time" }
                }
              },
              "monthlySupportHours": { "type": "number" }
              // ... and so on for 50+ more lines
            }
          },
          "settings": {
            "type": "object",
            "properties": {
              "region": { "type": "string" },
              "timezone": { "type": "string" }
              // ... dozens of configuration fields
            }
          }
        }
      }
    }

This single tool definition, with all its nested properties for subscriptions, invoices, and complex settings, clocked in at nearly 1,200 tokens. Not every tool was that large, but across 150 tools the definitions added up to roughly 75,000 tokens, and the conversation was dead before it even started. I had built a giant library but gave the librarian no room to stand.
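To see how quickly the tool count multiplies, here is a minimal Python sketch of the REST Mirror enumeration. It is not the D library's generator: the model names are placeholders and `rest_mirror_tools` is a hypothetical helper. Only the six operations and the figure of 25 models come from the text above.

```python
from itertools import product

# The six per-model operations named in the article.
OPERATIONS = ["get", "list", "create", "update", "replace", "delete"]

# Placeholder names: the application's real 25 models are not listed here.
MODELS = [f"model_{i:02d}" for i in range(1, 26)]


def rest_mirror_tools(models, operations):
    """Return one tool name per (model, operation) pair, REST Mirror style."""
    return [f"{op}_{model}" for model, op in product(models, operations)]


tools = rest_mirror_tools(MODELS, OPERATIONS)
print(len(tools))   # 25 models x 6 operations = 150
print(tools[:6])    # the six tools generated for the first model
```

Every one of those names carries its own description and input schema, which is where the token cost comes from.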

Context Is the New Embedded Memory

While browsing the MCP subreddit, I stumbled onto two discussions that changed my perspective: one analysing 78,000+ tool descriptions, and another measuring MCP vs CLI token costs.

They made me realise why tool size matters so much. The AI context window is like memory in an embedded system. It is precious and finite. In a standard app, your "code" (the MCP tool definitions) and your "application state" (the conversation history) share the exact same narrow hallway.

If your tools are too loud and take up too much space, your agent loses its short-term memory. It starts forgetting the user's instructions just to remember the schema for a configuration field it might never use. Worse, as the conversation grows, the tools can be pushed out of the active context entirely, leaving the agent unable to perform the tasks it was built for.
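A back-of-the-envelope budget makes the squeeze visible. The Python sketch below is illustrative only: the 128,000-token window is an assumed figure, not a number from those discussions, and `conversation_budget` is a hypothetical helper. The two tool-definition sizes are the totals reported in the results table of this article.

```python
def conversation_budget(context_window: int, tool_tokens: int) -> int:
    """Tokens left for instructions, history, and tool results once the
    tool definitions have been loaded into the context."""
    if tool_tokens > context_window:
        raise ValueError("tool definitions alone exceed the context window")
    return context_window - tool_tokens


ASSUMED_WINDOW = 128_000  # assumption: context size of a hypothetical model

for label, tool_tokens in [("REST Mirror", 75_000), ("consolidated", 1_500)]:
    left = conversation_budget(ASSUMED_WINDOW, tool_tokens)
    share = left / ASSUMED_WINDOW
    print(f"{label}: {left:,} tokens left ({share:.0%} of the window)")
```

With a smaller window the first case gets worse fast, and at some point the definitions no longer fit at all.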

The Pivot: From Static to Dynamic Tools

I needed to stop thinking about "endpoints" and start thinking about "capabilities." To save my library, I had to find every instance of duplication and cut it out.

Consolidating the Low-Hanging Fruit

The first realisation was that delete_user, delete_project, and delete_task are all doing the same thing. They just need an ID.

I collapsed dozens of specific tools into one: delete_record(model, id). The only trick was telling the LLM which models were available. I added the list of valid models to the examples field in the JSON schema.

    {
      "name": "delete_record",
      "description": "Delete a record by ID.",
      "inputSchema": {
        "type": "object",
        "properties": {
          "model": {
            "type": "string",
            "description": "The model name",
            "examples": ["user", "project", "task", "comment", "file"]
          },
          "id": {
            "type": "string",
            "description": "ID of the record to delete"
          }
        },
        "required": ["model", "id"]
      }
    }

Just like that, 25 tools became 1. I repeated this for get_record, and 50 of my tools collapsed into 2.
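On the server side, the consolidated tool needs a handler that dispatches on the model name. The following is a minimal Python sketch of that idea, not the D library's implementation: `STORE` is an in-memory stand-in for the data layer, and `delete_record_tool` and the handler's return shape are hypothetical.

```python
# In-memory stand-in for the real data layer (hypothetical).
STORE = {
    "user": {"u1": {"name": "Ada"}},
    "project": {"p1": {"title": "Compiler"}},
    "task": {},
}


def delete_record_tool(models):
    """Build the single delete_record definition from the known model names."""
    return {
        "name": "delete_record",
        "description": "Delete a record by ID.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "model": {
                    "type": "string",
                    "description": "The model name",
                    "examples": sorted(models),
                },
                "id": {"type": "string", "description": "ID of the record to delete"},
            },
            "required": ["model", "id"],
        },
    }


def delete_record(model: str, record_id: str) -> dict:
    """Handle a delete_record call for any model."""
    # `examples` is only a hint to the LLM, so the server still has to
    # reject model names it does not know.
    if model not in STORE:
        return {"ok": False, "error": f"unknown model '{model}', valid: {sorted(STORE)}"}
    if record_id not in STORE[model]:
        return {"ok": False, "error": f"no {model} with id '{record_id}'"}
    del STORE[model][record_id]
    return {"ok": True}


print(delete_record_tool(STORE)["inputSchema"]["properties"]["model"]["examples"])
print(delete_record("user", "u1"))
print(delete_record("invoice", "i9"))
```

In JSON Schema, `examples` is an annotation and does not constrain the value, which is why the handler validates the model name itself. An `enum` would make the constraint explicit in the schema, at a similar token cost.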

The Lazy Loading Schema Trick

The create and update tools were the real token-killers. A generic create_record wouldn't work on its own because the AI needs a well-structured JSON schema to know how to send the data; otherwise it would just guess and fail.

I decided to treat the LLM like a developer. When you don't know an API, you look at the documentation. So I created a tool called get_schema(model).

    {
      "name": "get_schema",
      "description": "Get the JSON Schema for a model, including field types and descriptions. Use this before create_record or update_record.",
      "inputSchema": {
        "type": "object",
        "properties": {
          "model": {
            "type": "string",
            "description": "The model name to get the schema for",
            "examples": ["user", "project", "task"]
          }
        },
        "required": ["model"]
      }
    }

Now create_record is generic. By adding a hint to the AI to call get_schema first, I saved thousands of tokens. I extended the same idea to update_record by adding an id field. What was once 50 tools (25 full replaces and 25 partial updates) became 2.
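The lazy-loading flow can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the library's code: `SCHEMAS` and `RECORDS` are hypothetical in-memory registries, the `task` fields are invented for the example, and the check is deliberately lighter than full JSON Schema validation.

```python
# Hypothetical schema registry. In the article's library these would be
# derived from the application's data structures.
SCHEMAS = {
    "task": {
        "type": "object",
        "properties": {
            "title": {"type": "string", "description": "Short summary"},
            "done": {"type": "boolean", "description": "Completion flag"},
        },
        "required": ["title"],
    },
}

RECORDS = {model: {} for model in SCHEMAS}


def get_schema(model: str) -> dict:
    """Return the full schema only when the agent asks for it."""
    if model not in SCHEMAS:
        raise KeyError(f"unknown model '{model}'")
    return SCHEMAS[model]


def create_record(model: str, data: dict) -> dict:
    """Generic create: a light check against the schema, then store."""
    schema = get_schema(model)
    missing = [f for f in schema.get("required", []) if f not in data]
    unknown = [f for f in data if f not in schema["properties"]]
    if missing or unknown:
        # Point the agent back at get_schema so it can correct itself.
        return {"ok": False, "missing": missing, "unknown": unknown,
                "hint": f"call get_schema('{model}') and retry"}
    record_id = str(len(RECORDS[model]) + 1)
    RECORDS[model][record_id] = data
    return {"ok": True, "id": record_id}


print(create_record("task", {"titel": "typo"}))               # rejected, with a hint
print(create_record("task", {"title": "Write docs", "done": False}))
```

The error path matters as much as the happy path: an agent that skips get_schema and guesses gets a response telling it exactly where to look, instead of a silent failure.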

Solving the List Query Problem

The final hurdle was the list tools. Every model has different query options — some filtered by date, others by status or tags. Following the same pattern, I introduced get_list_query_options(model).

This didn't just reduce 25 tools to 1; it removed the "static" query specification. The AI only pulls the filtering logic into its context when it actually needs to perform a search.
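The same pattern applied to listing might look like the Python sketch below. The names `QUERY_OPTIONS`, `ROWS`, and `list_records` are assumptions for illustration (the article only names get_list_query_options), and the filters are limited to simple equality checks.

```python
# Hypothetical per-model filter definitions, fetched on demand.
QUERY_OPTIONS = {
    "task": {"status": {"type": "string"}, "tag": {"type": "string"}},
    "user": {"role": {"type": "string"}},
}

# In-memory stand-in for the data layer.
ROWS = {
    "task": [
        {"id": "1", "status": "open", "tag": "docs"},
        {"id": "2", "status": "done", "tag": "docs"},
    ],
    "user": [{"id": "9", "role": "admin"}],
}


def get_list_query_options(model: str) -> dict:
    """Return the filters a model supports, only when the agent asks."""
    return QUERY_OPTIONS[model]


def list_records(model: str, query=None) -> list:
    """Generic list with equality filters checked against the model's options."""
    query = query or {}
    unknown = set(query) - set(QUERY_OPTIONS[model])
    if unknown:
        raise ValueError(f"unsupported filters for {model}: {sorted(unknown)}")
    return [row for row in ROWS[model]
            if all(row.get(key) == value for key, value in query.items())]


print(get_list_query_options("task"))
print(list_records("task", {"status": "open"}))
```

An agent that only ever deletes or creates records never pays for these filter definitions, because they enter the context only as the result of a call.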

The Result: Logarithmic Growth

By the time I was done, my 150-tool monstrosity had shrunk to 8 highly efficient tools. The impact on the context window was staggering.

| Metric | Naive Approach (REST Mirror) | Optimised "Simple" Library |
|---|---|---|
| Tool count | 150 tools | 8 tools |
| Total base tokens | ~75,000 tokens | ~1,500 tokens |
| Context savings | 0% | 98% |
| Scalability | Linear (bad) | Logarithmic (excellent) |

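The base cost no longer grows by a full set of tool schemas per model. If I add 100 more data models to my D language library, the base tax on the conversation barely budges: I just add their names to the examples lists. Strictly speaking, that is still a few tokens per model, so "logarithmic" here is shorthand for "nearly flat": each new model costs a handful of tokens instead of six new tool definitions.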
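A rough cost model shows the difference in slope. In the Python sketch below, the tool counts and token totals come from the table. The three tokens per model name and the idea that all eight tools list every model are assumptions chosen to be pessimistic, and both functions are hypothetical.

```python
OPERATIONS_PER_MODEL = 6           # get, list, create, update, replace, delete
AVG_TOKENS_PER_MIRROR_TOOL = 500   # 75,000 tokens / 150 tools, from the table
CONSOLIDATED_TOOLS = 8             # from the table
BASE_TOKENS_AT_25_MODELS = 1_500   # from the table
ASSUMED_TOKENS_PER_NAME = 3        # assumption: one model name in one examples list


def mirror_cost(models: int) -> int:
    """REST Mirror: every model brings six full tool definitions."""
    return models * OPERATIONS_PER_MODEL * AVG_TOKENS_PER_MIRROR_TOOL


def consolidated_cost(models: int) -> int:
    """Consolidated: fixed tool text plus one name per model per examples list.

    Pessimistically assumes all eight tools list every model name.
    """
    per_model = CONSOLIDATED_TOOLS * ASSUMED_TOKENS_PER_NAME
    fixed = BASE_TOKENS_AT_25_MODELS - 25 * per_model
    return fixed + models * per_model


for n in (25, 125):
    print(f"{n} models: mirror {mirror_cost(n):,} tokens, "
          f"consolidated {consolidated_cost(n):,} tokens")
```

Under these assumptions, going from 25 to 125 models takes the mirror from 75,000 to 375,000 tokens, while the consolidated set moves from 1,500 to 3,900. Both lines are straight, but one has a slope of 3,000 tokens per model and the other 24.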

Final Thoughts: Easy Is Not Simple

Building this taught me a lesson that goes beyond code. In software, we often confuse easy with simple.

It is easy to click "export" on a REST API and wrap it in an MCP server. It's familiar, and many frameworks encourage it. But as Rich Hickey famously argued in Simple Made Easy, easy is just about being "near to hand." It doesn't mean the system is simple. That easy path created a tangled mess of 75,000 tokens that suffocated the AI's ability to reason.

With classical APIs, it's essentially free to have thousands of routes. With MCP, the size of your API is your biggest cost.

As we move into AI-native development, we have to shift our mindset. We aren't just building plumbing for data; we are building environments for reasoning. If we want our agents to be brilliant, we have to stop giving them easy APIs that are heavy and bloated. We need to give them simple tools — dynamic, lightweight, and respectful of their focus.

In the end, the best gift you can give an AI isn't more features. It's the room to actually think.

If this way of thinking resonates, it's the same design philosophy we unpack in From Cloud Native to AI Native — 174 patterns for building systems that work with AI instead of around it. Normally $26.99, free here.
