Building Software Factories: The Blueprint for AI-Native Delivery

By Michael Mueller

A follow-up to "Your Engineering Org Is a Prompt Now" and "You Can't Transform What You Can't Read"

The previous posts made the case that your engineering organisation is now an instruction set, and that you need an honest vocabulary to read it before you can rewrite it. The question we keep getting is: what does the rewrite actually look like?

The answer is a software factory. A system where agents produce the code and humans design the system those agents operate within.

This post is the blueprint. What a software factory is, what it requires, how to build one, and how to roll it out in a large organisation without betting the company.

Why most enterprise AI pilots fail

Enterprise AI spending is massive. But the failure rate tells the real story: by most estimates, the vast majority of enterprise AI pilots never reach production. The numbers vary by study and the methodology is often questionable, but the pattern is consistent across every serious analysis.

The models are good enough. The problem is that organisations treat AI as a tool upgrade rather than an operating model change. They hand developers a coding assistant, measure lines-of-code-per-hour, declare a productivity gain, and move on. The org structure stays the same. The processes stay the same. The coordination overhead stays the same. The gain is real but marginal, and it never compounds because the system around it was designed for humans writing code by hand.

A software factory doesn't bolt agents onto your existing process. It replaces the process.

What a software factory actually is

In "Your Engineering Org Is a Prompt Now", I introduced the Agent Factory concept: to AI-native development what an Internal Developer Platform is to Cloud Native development. A central team builds, curates, and maintains the agents, prompt libraries, validation pipelines, and orchestration patterns that capability units consume to ship software. A robot factory makes robots; an agentic factory makes things using robots. A software factory makes software using agents.

That was the thirty-second version. Here is the full picture.

A software factory is a system with six core capabilities:

1. Orchestration

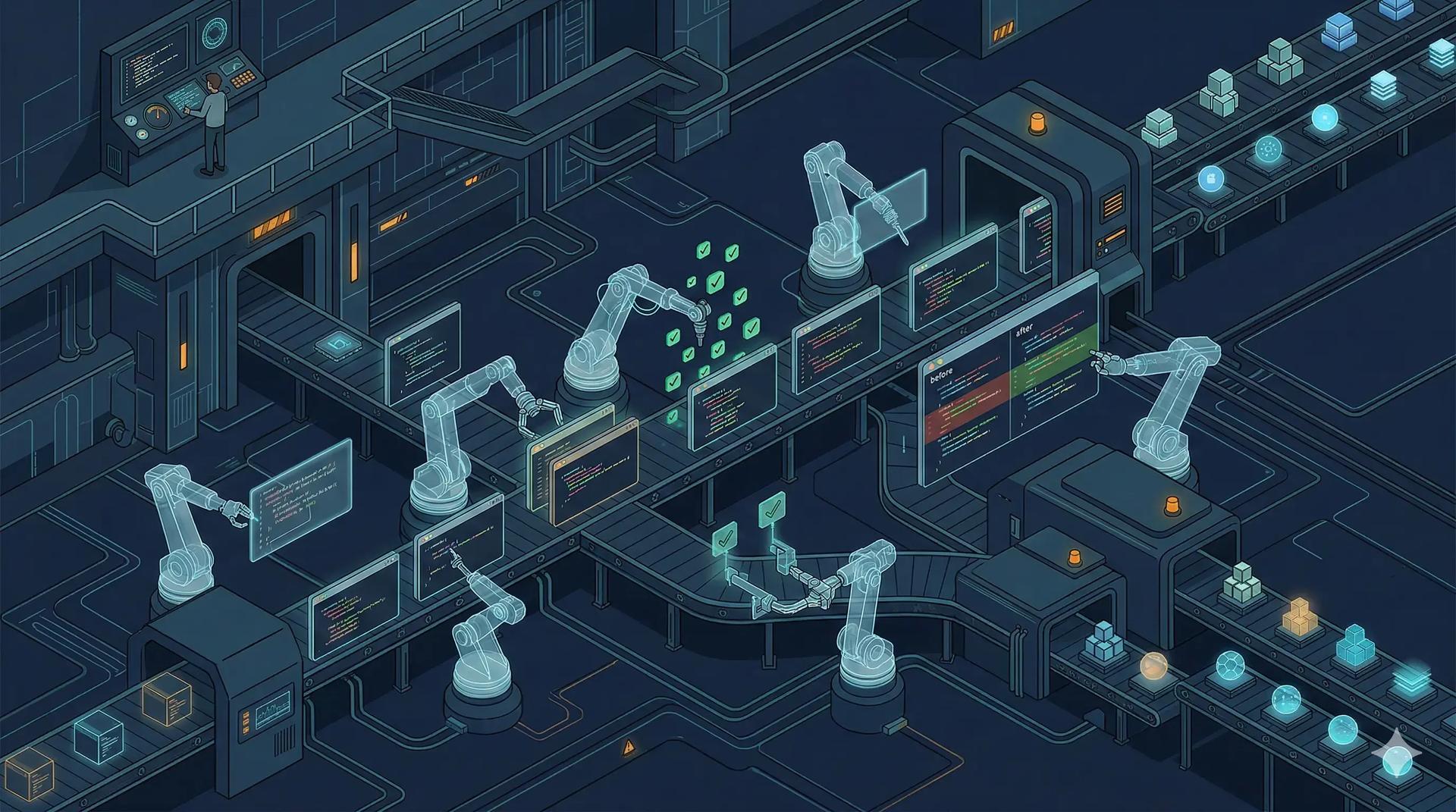

Who coordinates fifty agents working on the same codebase? You need a coordination layer that can decompose work, assign it to specialised agents, manage dependencies between tasks, and reassemble the output. This is the difference between "developer using an AI assistant" and "system that produces software."
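To make "decompose work and manage dependencies" concrete, here is a minimal sketch of the scheduling core, assuming work has already been split into tasks with known dependencies. The task names and the `plan_waves` helper are hypothetical; a real orchestrator would also handle agent assignment, retries, and reassembly.

```python
from graphlib import TopologicalSorter

# Hypothetical work items: each task maps to the set of tasks it depends on.
tasks = {
    "update-schema": set(),
    "add-endpoint": {"update-schema"},
    "write-tests": {"add-endpoint"},
    "update-docs": {"add-endpoint"},
}


def plan_waves(dependencies):
    """Group tasks into waves; tasks in the same wave can run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        # Every task whose dependencies have all been marked done.
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        # In a real system this happens when the agents report back.
        sorter.done(*ready)
    return waves


waves = plan_waves(tasks)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {', '.join(wave)}")
```

Each wave is a set of tasks that could be handed to separate agents at the same time; the next wave starts only when the previous one has been validated.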

The orchestration layer determines whether your factory is "dark" or "lights-on." In a dark factory, no humans write or read code. The most extreme implementations, like OpenAI's Harness Engineering or StrongDM's software factory, delegate everything to agents. In a lights-on factory, like Stripe's Minions, agents generate the majority of code and humans focus on review. Stripe ships over 1,300 AI-generated pull requests per week on this model.

Which variant is right depends on your team's maturity, your backlog quality, and your appetite for risk. Both are viable. Both require the same underlying infrastructure.

Right now, every implementation converges on the pull request as the atomic unit of agent work. Stripe's Minions, Ramp's Inspect, OpenAI's Harness, and Steve Yegge's Wasteland (which federates agent work across organisations using Git's fork/merge model on Dolt) all landed on the same protocol independently. The PR works because it's already integrated into CI, review, and deployment. But it's probably not the end state. As validation pipelines mature and trust in agent output increases, the PR becomes overhead. The trunk-based development crowd would argue that agents should be sharing individual commits to mainline immediately, with validation happening continuously rather than at the PR boundary. They're probably right. For now, PRs are the pragmatic starting point.

2. Isolated environments

This pattern is emerging as non-negotiable: organisations building software factories keep converging on it independently.

Stripe built Minions. Ramp built Inspect. Different companies, different codebases. Same architecture: cloud-based isolated environments where each agent gets its own sandbox. Spin up in seconds, run tests, verify the change, open a PR, tear down. No shared state. No port conflicts. No waiting.

Ramp reached the point where its agent writes 30% of all PRs. Neither company could do this on localhost.
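The lifecycle described above (spin up, run the checks, tear down, share nothing) can be sketched in a few lines. This is an illustration only: a temporary directory stands in for a real cloud sandbox and provides no security isolation, and the `sandbox` and `run_in_sandbox` names are hypothetical.

```python
import subprocess
import sys
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def sandbox():
    """Create a throwaway working directory and delete it on exit."""
    with tempfile.TemporaryDirectory(prefix="agent-") as path:
        yield Path(path)


def run_in_sandbox(files, command):
    """Write the agent's files into a fresh sandbox, run the check, tear down."""
    with sandbox() as workdir:
        for name, content in files.items():
            (workdir / name).write_text(content, encoding="utf-8")
        result = subprocess.run(
            command, cwd=workdir, capture_output=True, text=True
        )
        # The directory is removed as soon as this block exits.
        return result.returncode == 0, result.stdout + result.stderr


# Stand-in for "run the tests": a tiny script that asserts and prints.
passed, log = run_in_sandbox(
    {"check.py": "assert 1 + 1 == 2\nprint('ok')\n"},
    [sys.executable, "check.py"],
)
```

In a production factory the same shape holds, but the context manager would provision a container or VM rather than a directory, and a passing run would end with a PR being opened.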

There's a useful distinction between agents running background tasks (multiple terminals, git worktrees, maybe a Mac Mini) and actual background agents: infrastructure with event-driven triggers, isolated compute, and a governance layer. The first is you running a few agents on your laptop. The second is a system that remediates CVEs within hours of disclosure, updates dependencies across hundreds of repos, or migrates CI pipelines at scale, all without a human typing a prompt.

But the operational tasks are just the warm-up. The real point of a software factory is executing properly defined development tasks from a backlog: features, user stories, bug fixes. That requires the backlog discipline mentioned in the discovery phase below. If your tickets are vague enough that a human engineer would need to ask three clarifying questions before starting, an agent will silently build the wrong thing. The quality of your specifications becomes the bottleneck, which is why revolution and evolution have to run together.

You need to decouple agents from engineer workstations. This will become standard infrastructure within a year, the same way CI/CD did.

We are building Assembly Line as our approach to agentic coding workflows (WIP).

3. Context and memory

Agent output quality is directly proportional to context quality. I wrote about this in the previous posts and it remains the most underinvested capability in every organisation we work with.

A software factory needs structured, versioned, machine-readable context: architecture decision records, API schemas, domain models, coding conventions, test strategies. The wiki that was last updated in 2023 doesn't count. Neither does the tribal knowledge that lives in Slack threads.

Beyond per-session context, agents need persistent memory. What did the agent learn from the last hundred pull requests in this repository? What patterns consistently fail code review? What architectural decisions were made and why? Without memory, every agent session starts from zero. With it, the system accumulates institutional knowledge that compounds over time.
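As a rough illustration of persistent memory, the sketch below stores lessons as JSON lines in a file that could live in the repository and be versioned with it. The `RepoMemory` class, the topic names, and the example lessons are all hypothetical; real systems would add retrieval, ranking, and expiry.

```python
import json
import tempfile
from pathlib import Path


class RepoMemory:
    """Append-only store of lessons learned, persisted as JSON lines."""

    def __init__(self, path):
        self.path = Path(path)

    def record(self, topic, lesson):
        """Append one lesson so later sessions can read it."""
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"topic": topic, "lesson": lesson}) + "\n")

    def recall(self, topic):
        """Return every lesson recorded for a topic, oldest first."""
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            entries = [json.loads(line) for line in f if line.strip()]
        return [e["lesson"] for e in entries if e["topic"] == topic]


# Demo in a temporary directory; a real store would sit alongside the code.
with tempfile.TemporaryDirectory() as directory:
    memory = RepoMemory(Path(directory) / "memory.jsonl")
    memory.record("migrations", "Reviewers reject migrations without a rollback step.")
    memory.record("api", "Public list endpoints need pagination.")
    lessons = memory.recall("migrations")
```

A new agent session would call `recall` for the topics its task touches and prepend the result to its context, so it does not start from zero.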

4. Security and governance

When agents are generating and shipping code, you need a classification system: what auto-ships through the validation pipeline, what requires human review, and what is a hard stop.

Documentation updates and test additions? Auto-ship. New API endpoints and schema changes? One human reviewer. Authentication changes, payment flows, or anything touching personal data? Validation architect and domain expert sign-off. No exceptions.

This needs to be enforced through linters, structural tests, and CI gates, not through review checklists that people ignore under deadline pressure. One underappreciated mechanism: signed commits. If only human-authored commits carry GPG signatures, you get a clear, cryptographic audit trail of which code a human actually approved versus what an agent produced autonomously. This distinction matters when something goes wrong.

We are building Shift Log to extend this further: AI coding agent conversations saved as Git Notes attached to commits, so every code change retains its reasoning in git history.

Yegge's Wasteland takes this further with multi-dimensional stamps on completed work: quality, reliability, creativity scored independently by validators, all traceable back to the specific evidence. Whether or not you adopt that model, the principle holds: agent-generated code needs a richer audit trail than a green CI badge.
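The three-tier classification in this section (auto-ship, one reviewer, expert sign-off) is the kind of rule a CI gate can enforce mechanically. A minimal sketch, assuming changes are classified by the paths they touch; the path patterns and the `Gate` names are hypothetical and would need to match your repository layout.

```python
from enum import IntEnum
from fnmatch import fnmatch


class Gate(IntEnum):
    AUTO_SHIP = 0
    ONE_REVIEWER = 1
    EXPERT_SIGNOFF = 2


# Hypothetical path rules, checked in order.
RULES = [
    ("src/auth/*", Gate.EXPERT_SIGNOFF),
    ("src/payments/*", Gate.EXPERT_SIGNOFF),
    ("src/api/*", Gate.ONE_REVIEWER),
    ("migrations/*", Gate.ONE_REVIEWER),
    ("docs/*", Gate.AUTO_SHIP),
    ("tests/*", Gate.AUTO_SHIP),
]


def classify_file(path):
    """Return the gate for one file; unknown paths default to human review."""
    for pattern, gate in RULES:
        if fnmatch(path, pattern):
            return gate
    return Gate.ONE_REVIEWER


def classify_change(paths):
    """A change is as restricted as the most sensitive file it touches."""
    return max((classify_file(p) for p in paths), default=Gate.ONE_REVIEWER)


print(classify_change(["docs/setup.md", "src/auth/token.py"]).name)
```

Defaulting unknown paths to human review keeps the gate fail-safe: a new directory never auto-ships until someone adds a rule for it.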

5. Validation pipelines

The validation pipeline is what separates a software factory from a prompt-and-pray workflow. Every agent output passes through automated verification: tests, linting, type checking, security scanning, architectural fitness functions. If it passes, it ships. If it doesn't, it gets fed back to the agent with the failure context for another attempt.
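The pass-or-retry loop can be sketched as follows. This is an assumption-laden illustration, not any vendor's pipeline: `generate` stands in for a call to an agent, each check stands in for a real tool (tests, linter, scanner), and `fake_agent` and `has_docstring` exist only to make the example runnable.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    passed: bool
    feedback: str = ""


def validate_with_retries(
    generate: Callable[[str, str], str],
    checks: list[Callable[[str], Verdict]],
    task: str,
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate that passes every check, or None."""
    feedback = ""
    for _ in range(max_attempts):
        candidate = generate(task, feedback)
        verdicts = [check(candidate) for check in checks]
        failures = [v.feedback for v in verdicts if not v.passed]
        if not failures:
            return candidate  # passes: ready to ship
        feedback = "\n".join(failures)  # failure context for the next attempt
    return None  # attempts exhausted: escalate to a human


def fake_agent(task, feedback):
    # Stand-in for a model call: adds the docstring only once told to.
    body = "def add(a, b):\n    return a + b\n"
    if "docstring" in feedback:
        return '"""Add two numbers."""\n' + body
    return body


def has_docstring(code):
    return Verdict('"""' in code, "missing module docstring")


result = validate_with_retries(fake_agent, [has_docstring], "write add()")
```

The attempt cap matters: without it, an agent that cannot satisfy a check loops forever instead of surfacing the problem to a person.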

StrongDM's software factory takes an approach borrowed from machine learning: holdback scenarios. End-to-end user stories are stored outside the codebase where the agents cannot see them, like a holdout set in model training. The agents write the code, and the holdback scenarios validate whether the result actually satisfies the user. This shifts validation from boolean ("the test suite is green") to probabilistic ("of all observed trajectories through all scenarios, what fraction satisfies the user?"). They pair this with a Digital Twin Universe: behavioural clones of Okta, Jira, Slack, and other services that let them run thousands of scenarios per hour without hitting production rate limits.
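The probabilistic measure quoted above reduces to a simple ratio: satisfied trajectories over all observed trajectories, pooled across scenarios. A minimal sketch follows; the scenario names and outcomes are invented for illustration and are not StrongDM's data or implementation.

```python
def satisfaction_rate(trajectories):
    """Fraction of all observed trajectories, across scenarios, that satisfied the user."""
    outcomes = [ok for runs in trajectories.values() for ok in runs]
    if not outcomes:
        raise ValueError("no trajectories observed")
    return sum(outcomes) / len(outcomes)


# Hypothetical holdback scenarios, each run several times (True = satisfied).
observed = {
    "login-via-sso": [True, True, True, False],
    "create-ticket": [True, True],
    "revoke-access": [True, False, True, True],
}

rate = satisfaction_rate(observed)
print(f"{rate:.0%} of trajectories satisfied the user")
```

A release gate would then compare `rate` against a threshold agreed in advance, rather than waiting for a single green test run.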

This is where the cloud-based isolated environments pay off. Each agent can run the full test suite in its own sandbox without interfering with other agents working on different changes. This is what makes the factory scale.

6. Learning and improvement

The system should get smarter over time, through better context engineering rather than fine-tuning (which is expensive and fragile). Track which patterns produce code that passes review. Track which architectural patterns the agents consistently get wrong. Feed those learnings back into the context layer.

Every interaction generates data unique to your organisation. Every pull request, every code review comment, every test failure becomes training signal for better prompt engineering and context curation. Organisations that start building this feedback loop now accumulate advantages that late movers cannot shortcut.
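One way to turn review comments into context is to count recurring blocking issues and write the frequent ones back as guidance. The sketch below assumes review comments have already been tagged with a category, which is the hard part in practice; the field names, categories, and `top_review_failures` helper are hypothetical.

```python
from collections import Counter


def top_review_failures(review_comments, min_count=2):
    """Return (category, count) pairs for blocking issues seen at least min_count times."""
    counts = Counter(c["category"] for c in review_comments if c["blocking"])
    return [(cat, n) for cat, n in counts.most_common() if n >= min_count]


def to_context_notes(failures):
    """Render recurring failures as lines that can be added to agent context."""
    return [f"Recurring review issue ({n}x): {cat}" for cat, n in failures]


# Invented sample of tagged review comments.
comments = [
    {"category": "missing-tests", "blocking": True},
    {"category": "missing-tests", "blocking": True},
    {"category": "missing-tests", "blocking": True},
    {"category": "error-handling", "blocking": True},
    {"category": "error-handling", "blocking": True},
    {"category": "naming", "blocking": True},
    {"category": "naming", "blocking": False},
]

notes = to_context_notes(top_review_failures(comments))
```

The `min_count` threshold keeps one-off reviewer preferences out of the context layer, so only patterns that repeat become standing guidance.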

The architecture

These six capabilities compose into a layered architecture:

| Layer | Function | Example tooling |
|---|---|---|
| Orchestration | Decompose work, coordinate agents, manage dependencies | Wave, Claude Code teams, Claude Flow |
| Environment | Isolated sandboxes for parallel agent execution | Assembly Line, Codespaces, Devcontainers |
| Context | Structured knowledge: ADRs, schemas, conventions | AGENTS.md, spec-driven repos, in-repo documentation |
| Validation | Automated verification of agent output | CI/CD, structural tests, architectural fitness functions |
| Governance | Classification, traceability, audit | Shift Log, approval gates, permission scoping |
| Learning | Feedback loops from production to context | Prompt analytics, review signal aggregation |

Each layer builds on the ones below it. Try to orchestrate without isolated environments and you bottleneck immediately. Try to validate without structured context and you're chasing noise. Try to learn without governance capturing what happened and you're guessing.

Rolling it out: revolution and evolution

Here is where most organisations get it wrong. They try to transform everything at once, or they settle for incremental tool adoption that never compounds. Neither works.

What works is running two tracks in parallel: revolution and evolution.

Revolution: build the factory

Pick your highest-performing team. The one with the best backlog discipline, the clearest requirements, the most mature engineering practices. Send your best people, because what they build will change how the rest of the organisation works.

The discovery phase matters:

- Identify the right team. Discipline over enthusiasm. The team with the best product management practice: well-defined requirements, ability to communicate needs, and ability to validate that intended outcomes are being achieved.

- Value stream map their process. Where does work enter? How does it flow? Where are the handoffs, the queues, the wait states? This reveals what can be automated and what cannot.

- Determine dark or lights-on. The backlog quality determines this. If requirements are precise enough for agents to execute without human clarification, dark is viable. If not, lights-on is the right starting point.

Then deliver: two to three senior engineers work with the team over 12 weeks to build a software factory MVP that takes real backlog items and ships real code to production with agent-generated implementations.

Evolution: upskill the rest

While the factory team builds the future, the rest of the organisation needs to catch up to the present. Most developers are still at stages 2-4 on the AI coding adoption ladder: using a coding assistant with permission prompts on, maybe YOLO mode in an IDE. They need to get to stages 5-6: working from the CLI, running multiple agents, and building confidence with agentic workflows.

A one-day workshop won't cut it. Lasting behaviour change requires:

- Spaced repetition. Weekly modules, not a firehose. Each session introduces one capability, practises it under supervision, and sets a challenge to apply it in real work.

- Real-world application. Contrived exercises build familiarity. Real challenges build competence. "Implement a feature from your backlog using an agentic workflow" beats "follow this tutorial" every time.

- Social learning. Cohorts with dedicated channels for ad-hoc support. Discussion of exercises and experiences in group settings. Verbal retracing of steps to improve recall.

- Train-the-trainers. Scale by teaching your people to teach. An intensive programme that equips internal champions to deliver the curriculum independently.

The revolution track produces the factory. The evolution track produces the people who can use it. Neither works without the other.

What "boring" gets right

Something counterintuitive from every software factory we have built or helped build: boring technologies produce better results than cutting-edge ones.

Composable APIs. Stable interfaces. Well-documented libraries with deep representation in training data. These produce predictable, high-quality agent output. The clever framework with the custom DSL? Agents struggle with it. The battle-tested, well-documented alternative? Agents nail it consistently.

This matters for technology choices. The evaluation criteria for your stack should now include "agent legibility" alongside traditional concerns. Can an agent read the documentation and produce correct code? If not, the technology is a liability in an agent-driven world, regardless of its other merits.

The talent question

There is a risk in the software factory model that nobody wants to talk about: what happens to junior engineers?

If your teams are three to four senior specification engineers working with agent fleets, where do juniors learn? You have eliminated the entry-level rung of the career ladder. Freeze junior hiring for three years while the model matures and you create a talent hollow: an inverted pyramid where nobody is coming up behind your senior engineers.

This requires deliberate design. Apprenticeship rotations through capability units. Onboarding tracks in the software factory itself. Cross-domain exposure programmes. You have to build the pipeline that the old model provided passively through large teams and pair programming.

Ignore this and in five years you will be desperately trying to hire seniors that the industry stopped producing.

Start here

If this resonates, do not reorganise your entire engineering department. Here is the sequence:

Read your current state honestly. Use the pattern cards or the AI-native assessment to get an accurate picture of where you are, not where you think you are.

Pick one team. Three or four people. One well-scoped product domain. The best backlog discipline in the organisation.

Build the factory with them. Two to three senior engineers spend 12 weeks co-delivering the MVP alongside the team. The team needs to own it once those engineers step back.

Start upskilling the rest. Weekly cohorts, real challenges, dedicated support channels. Build toward train-the-trainers so enablement scales independently.

Measure what matters. Time from specification to production. Human hours per shipped feature. Defect rate on agent-generated code versus human-written code. These are the numbers that will make the case for rolling the factory out further.
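These three measures are straightforward to compute once each shipped feature is recorded with a few fields. The sketch below is one possible shape, with a hypothetical `Feature` record and made-up sample data used purely to show the arithmetic.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Feature:
    spec_approved: datetime
    deployed: datetime
    human_hours: float
    agent_generated: bool
    defects: int


def median_lead_time_days(features):
    """Median time from specification to production, in days."""
    return median(
        (f.deployed - f.spec_approved).total_seconds() / 86400 for f in features
    )


def human_hours_per_feature(features):
    """Average human hours spent per shipped feature."""
    return sum(f.human_hours for f in features) / len(features)


def defects_per_feature(features, agent_generated):
    """Defects per feature for agent-generated or human-written code."""
    subset = [f for f in features if f.agent_generated == agent_generated]
    if not subset:
        return None
    return sum(f.defects for f in subset) / len(subset)


# Made-up sample data for illustration only.
shipped = [
    Feature(datetime(2025, 1, 6), datetime(2025, 1, 8), 3.0, True, 0),
    Feature(datetime(2025, 1, 6), datetime(2025, 1, 10), 5.0, True, 1),
    Feature(datetime(2025, 1, 7), datetime(2025, 1, 17), 16.0, False, 1),
]

lead_time = median_lead_time_days(shipped)
hours = human_hours_per_feature(shipped)
agent_defects = defects_per_feature(shipped, agent_generated=True)
human_defects = defects_per_feature(shipped, agent_generated=False)
```

The median is used for lead time so that one stalled feature does not dominate the number; the defect comparison is only meaningful once both groups contain enough features.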

The window for building this advantage is 12-18 months. The tools will only get better, but the organisations that build software factories now accumulate advantages in context, memory, institutional knowledge, and process maturity that late movers cannot shortcut.

The factory does not just produce software faster. It produces an organisation that gets better at producing software. Continuously. Automatically. And the gap it opens is hard to close from behind.

Your engineering org is a prompt. The software factory is the runtime.

If you are exploring what a software factory looks like for your engineering organisation, get in touch. We also offer agentic coding coaching and run in-house workshops with the Transformation Pattern Library.
