Your Engineering Org Is a Prompt Now

By Michael Mueller

Why the AI-native shift isn't about giving developers better tools. It's about making most of your org chart irrelevant.

We've been here before. A decade ago, Cloud Native forced a reckoning. Companies that treated containers and microservices as a technology upgrade got burned. The ones that understood it was an operating model shift, that it demanded new team structures, new processes, and new ways of thinking about infrastructure, pulled ahead.

AI Native is that same reckoning, but faster and more brutal. And this time, it's not your infrastructure that gets restructured. It's your people.

The uncomfortable math

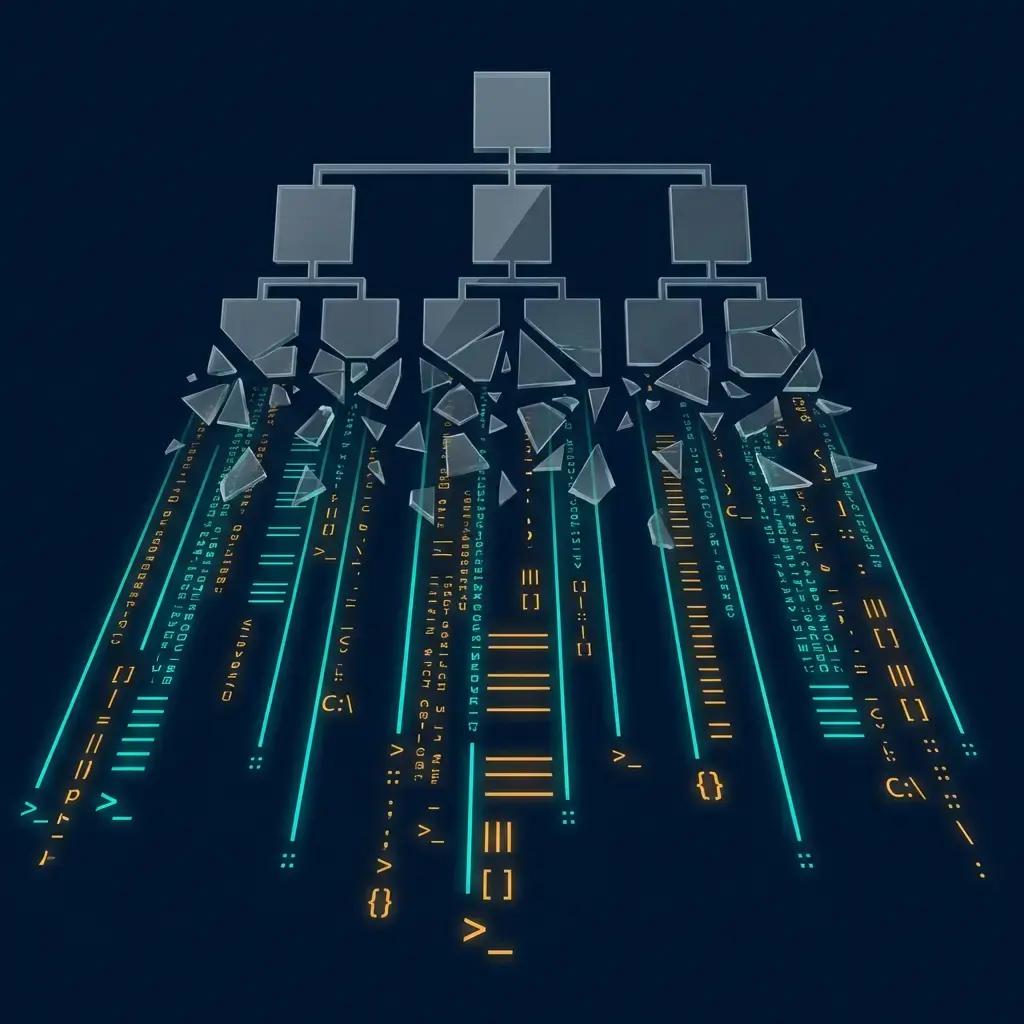

Take a typical engineering organisation. Say, 100 developers in 12-15 teams, each with a team lead, maybe a scrum master, a tech lead, an architect hovering above. Lots of coordination roles. Lots of people whose job is to decompose work, track progress, align priorities, and pass information between humans.

Now consider: OpenAI recently published a case study called Harness Engineering. A team of three engineers, scaling to seven, shipped a million lines of production code in five months. Zero manually written code. All of it generated by Codex agents: application logic, tests, CI configuration, documentation, observability, internal tooling. The humans didn't write code. They designed environments, specified intent, and built feedback loops.

Three engineers. A million lines. Five months.

If that doesn't make you rethink your org chart, I don't know what will.

The shift nobody wants to talk about

When I work with engineering leaders on AI-native transformation, they invariably want to talk about tools. Which coding assistant? Which model? How do we measure productivity gains?

Those are the wrong questions. The right question is: what happens to your org structure when execution scales with compute instead of headcount?

Teams shrink from 8-10 to 3-4. You don't need a team lead for three people. Sprints become pointless when agents execute in hours, so scrum masters go with them. Golden paths encode technical direction, and some tech lead functions move to the platform. Specification engineers own architecture decisions within their domain, and the architect role fragments.

Run the numbers on your own org. Count the coordination roles. Count the people whose primary job is to pass information between other humans, track status, or decompose work that agents can decompose faster and more consistently.
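As a rough illustration of that counting exercise, here is a minimal Python sketch. The role labels and the example roster are assumptions made up for this example (12 teams, each with a team lead, scrum master and tech lead on top of 100 developers, plus three architects); swap in your own titles and headcount.

```python
from collections import Counter

# Hypothetical role labels; adjust these to match your own org's titles.
COORDINATION_ROLES = {"team lead", "scrum master", "tech lead", "architect"}


def coordination_share(roster):
    """Return (coordinating, total, share) for a list of role names."""
    counts = Counter(role.lower() for role in roster)
    coordinating = sum(n for role, n in counts.items() if role in COORDINATION_ROLES)
    total = sum(counts.values())
    share = coordinating / total if total else 0.0
    return coordinating, total, share


# Illustrative roster only, not data from any real organisation.
roster = (
    ["developer"] * 100
    + ["team lead"] * 12
    + ["scrum master"] * 12
    + ["tech lead"] * 12
    + ["architect"] * 3
)

coordinating, total, share = coordination_share(roster)
print(f"{coordinating} of {total} people ({share:.0%}) hold coordination roles")
```

The point of the sketch is the ratio, not the exact figure: whatever your real numbers are, that share is the part of the org chart the rest of this article is about.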

That's the layer that's about to compress.

From Platform Teams to Agent Factories

If you're in the Cloud Native world, you already understand Platform Engineering. A central team builds the Internal Developer Platform (CI/CD, observability, service templates, self-service provisioning) so product teams don't reinvent infrastructure. Product teams consume what the platform provides.

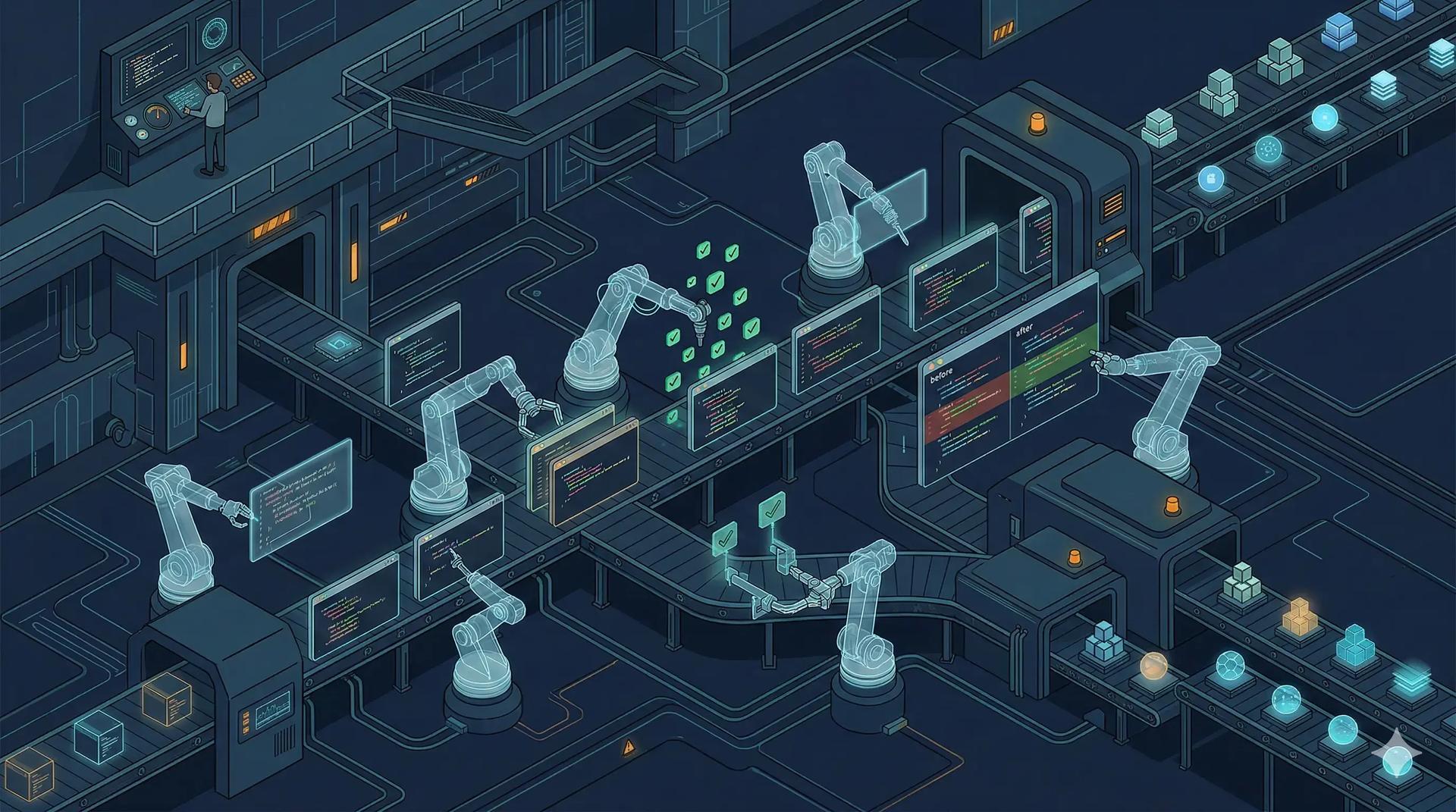

Agent Factories are the next evolution of that same idea.

Instead of building infrastructure abstractions, an Agent Factory builds, curates, and maintains the agents, prompt libraries, validation pipelines, and orchestration patterns that capability units consume to ship software. A team working on tenant onboarding doesn't build their own agent stack from scratch. They pull a pre-validated agent configuration from the factory, wire it into their domain context, and go.

This is the critical enablement layer. Without it, you're asking every 3-person team to independently figure out how to work with agents. With it, you're giving them a pre-built, battle-tested foundation, just like a good Internal Developer Platform does for infrastructure.

The same design principles apply too. Self-service with guardrails. The factory defines the boundaries of what's safe and lets teams move freely inside them, rather than approving each action case by case. If capability units have to wait for the factory team to approve every agent configuration, you've just recreated the bottleneck you were trying to eliminate.

We're building wave as our take on this. It lets you define multi-agent pipelines in YAML, version them in git, and run them with persona-scoped permissions. A navigator persona can explore but never modify. A craftsman can implement but not push to remote. An auditor can review but not fix. Infrastructure-as-Code thinking applied to AI workflows.
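To make the persona idea concrete, here is a minimal Python sketch of persona-scoped permissions. It is not wave's actual configuration format or API; the `Permission` flags, the `Persona` class and the `authorize` helper are all hypothetical names chosen for illustration, and only the three personas and their limits come from the description above.

```python
from dataclasses import dataclass
from enum import Flag, auto


class Permission(Flag):
    """Hypothetical action set; a real system would likely be finer-grained."""
    READ = auto()
    WRITE = auto()
    PUSH = auto()
    REVIEW = auto()


@dataclass(frozen=True)
class Persona:
    name: str
    allowed: Permission

    def can(self, action: Permission) -> bool:
        # True only if every bit of the requested action is granted.
        return (self.allowed & action) == action


# Personas as described in the text: explore only, implement without
# pushing, review without fixing.
PERSONAS = {
    "navigator": Persona("navigator", Permission.READ),
    "craftsman": Persona("craftsman", Permission.READ | Permission.WRITE),
    "auditor": Persona("auditor", Permission.READ | Permission.REVIEW),
}


def authorize(persona_name: str, action: Permission) -> None:
    """Raise PermissionError if the persona is not allowed to perform action."""
    persona = PERSONAS[persona_name]
    if not persona.can(action):
        raise PermissionError(f"{persona_name} may not {action.name}")
```

Calling `authorize("navigator", Permission.WRITE)` raises `PermissionError`, while `authorize("craftsman", Permission.WRITE)` returns silently. The design choice worth copying is that permissions are declared as data next to the pipeline, so they can be versioned and reviewed like any other code.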

Context is the new code

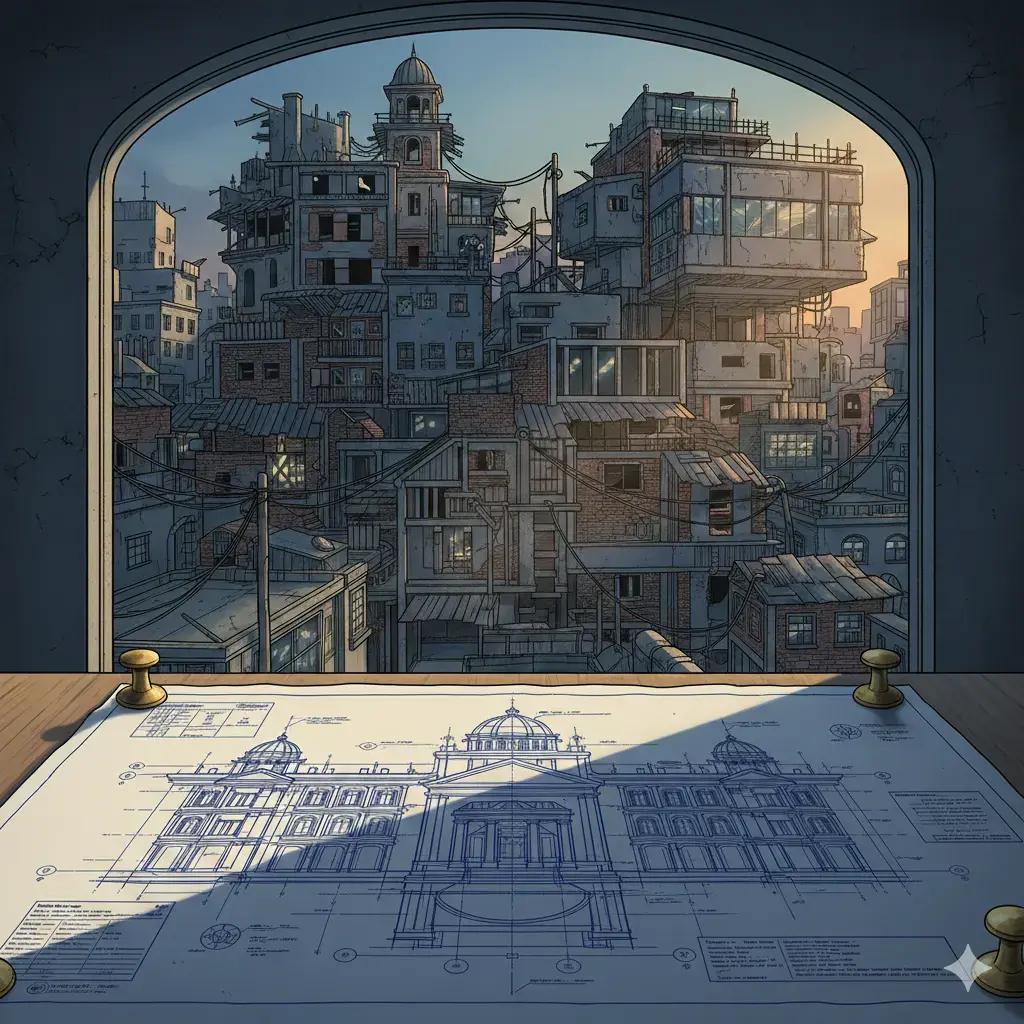

The OpenAI team learned something early that most organisations haven't figured out yet: agent output quality tracks context quality. Better context in, better code out.

They tried the obvious approach first: a massive instruction file telling the agent everything it needed to know. It failed. Context is a scarce resource. A giant instruction file crowds out the actual task. Too much guidance becomes non-guidance. And a file that size goes stale almost immediately.

Instead, they treated their instructions as a table of contents pointing to a structured knowledge base. Design docs, architecture decision records, API schemas, domain models. All version-controlled, all in-repo, all machine-readable.
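One way to keep such a table of contents honest is to check mechanically that every pointer still resolves. The sketch below is an assumption about how that could look, not a description of the OpenAI team's setup: the `KNOWLEDGE_INDEX` mapping, its paths and the `find_broken_pointers` helper are all hypothetical.

```python
from pathlib import Path

# Hypothetical index: topic -> path relative to the repository root.
# In practice this might be parsed from the agent instruction file itself.
KNOWLEDGE_INDEX = {
    "architecture decisions": "docs/adr/README.md",
    "domain model": "docs/domain/model.md",
    "public API schema": "api/openapi.yaml",
}


def find_broken_pointers(repo_root, index):
    """Return the topics whose target file does not exist under repo_root."""
    root = Path(repo_root)
    return sorted(
        topic for topic, rel_path in index.items()
        if not (root / rel_path).is_file()
    )
```

Run as a CI step, a non-empty result from `find_broken_pointers(".", KNOWLEDGE_INDEX)` would fail the build, so a renamed or deleted document gets noticed before an agent is pointed at nothing.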

This is the part that most AI-native transformations get wrong. They invest in agent tooling and orchestration infrastructure while neglecting the single biggest lever: the quality of the knowledge those agents consume.

From the agent's point of view, anything it can't access in-context doesn't exist. That architectural decision you aligned on in a Slack thread? Invisible. That domain model in someone's head? Invisible. That convention everyone "just knows"? Invisible.

If it's not in the repo, structured and current, it's not real. Context engineering, the discipline of maintaining that knowledge, isn't a nice-to-have. It's the core competency of the AI-native engineering org.

What "boring" gets right

Something counterintuitive: boring technologies are better for agent-driven development. Composable APIs, stable interfaces, well-documented libraries with deep representation in training data. These produce more predictable, higher-quality agent output.

The cutting-edge framework with the clever DSL? Agents struggle with it. The battle-tested, well-documented, "boring" alternative? Agents nail it consistently.

This matters for technology choices going forward. The evaluation criteria for your stack should include "agent legibility" alongside all the traditional considerations. In some cases, the OpenAI team actually reimplemented subsets of library functionality rather than fighting opaque upstream behaviour. That's a provocative architectural choice, but it makes sense when your primary "developer" is an agent that reasons better over explicit, self-contained code.

The governance question nobody's asking

When I talk to CTOs about agent-driven development, security and governance are usually an afterthought. That's backwards. When agents are generating and shipping code, you need a classification system: what auto-ships through the validation pipeline, what requires human review, and what's a hard stop.

For documentation updates and test additions? Auto-ship. For new API endpoints and schema changes? One human reviewer. For authentication changes, payment flows, or anything touching personal data? Validation architect and domain expert sign-off. No exceptions.

This isn't bureaucracy. It's the safety net that lets you move fast on everything else. And it needs to be enforced mechanically, through linters, structural tests, and CI gates, not through manual review checklists that everyone ignores under deadline pressure.
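Here is a minimal sketch of what such a mechanical gate could look like in Python. The path patterns, the `Tier` names and the `gate` helper are assumptions for illustration; the sketch also simplifies the top tier to "two approvals" rather than checking that they come from a validation architect and a domain expert specifically.

```python
from enum import IntEnum
from fnmatch import fnmatchcase


class Tier(IntEnum):
    AUTO_SHIP = 0       # docs, tests
    ONE_REVIEWER = 1    # new endpoints, schema changes
    DUAL_SIGN_OFF = 2   # auth, payments, personal data


# Hypothetical path patterns; map these to your own repository layout.
RULES = [
    ("src/auth/*", Tier.DUAL_SIGN_OFF),
    ("src/payments/*", Tier.DUAL_SIGN_OFF),
    ("src/api/*", Tier.ONE_REVIEWER),
    ("migrations/*", Tier.ONE_REVIEWER),
    ("docs/*", Tier.AUTO_SHIP),
    ("tests/*", Tier.AUTO_SHIP),
]

REQUIRED_APPROVALS = {
    Tier.AUTO_SHIP: 0,
    Tier.ONE_REVIEWER: 1,
    Tier.DUAL_SIGN_OFF: 2,
}


def classify(changed_paths):
    """Return the strictest tier triggered by any changed path."""
    tier = Tier.AUTO_SHIP
    for path in changed_paths:
        matched = [t for pattern, t in RULES if fnmatchcase(path, pattern)]
        # Paths no rule covers default to human review, never to auto-ship.
        tier = max(tier, max(matched, default=Tier.ONE_REVIEWER))
    return tier


def gate(changed_paths, approvals):
    """True if the change set has enough approvals to ship."""
    return approvals >= REQUIRED_APPROVALS[classify(changed_paths)]
```

For example, `gate(["docs/intro.md", "tests/test_intro.py"], approvals=0)` passes, while `gate(["src/auth/login.py"], approvals=1)` does not. The important property is that one sensitive file escalates the whole change set, and that unclassified paths fail safe.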

Part of this is traceability. When an agent generates code, you need to know why. We're building shift-log, an open-source tool that saves AI coding agent conversations as Git Notes attached to commits. Every code change keeps its reasoning in git history. It's early and evolving, but it's exactly the kind of tooling an Agent Factory should provide out of the box.
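The underlying Git mechanism is simple enough to sketch. The Python below is not shift-log's implementation; it only shows how a transcript could be attached to a commit with the standard `git notes` command. The function names and the `agent-log` notes ref are hypothetical.

```python
import subprocess


def build_notes_command(commit, transcript, ref="agent-log"):
    """Build the git command that attaches transcript as a note on commit.

    The ref name "agent-log" is an assumption made for this example.
    """
    return [
        "git", "notes", "--ref", ref,
        "add", "-f",        # -f overwrites an existing note on this commit
        "-m", transcript,
        commit,
    ]


def attach_transcript(commit, transcript, ref="agent-log"):
    """Run the command in the current repository; raises on git failure."""
    subprocess.run(build_notes_command(commit, transcript, ref), check=True)
```

A note written this way can be read back with `git notes --ref agent-log show <commit>`. Notes live under their own ref, so they travel with the repository only if that ref is pushed and fetched explicitly.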

The talent hollow

There's a risk in the AI-native model that nobody in the thought-leadership circuit wants to acknowledge: what happens to junior engineers?

If your teams are 3-4 senior specification engineers working with agent fleets, where do juniors learn? You've just eliminated the entry-level rung of the career ladder. If you freeze junior hiring for three years while the model matures, you've created a talent hollow, an inverted pyramid where there's nobody coming up behind your senior engineers.

This requires deliberate design. Apprenticeship rotations through capability units. Structured onboarding tracks in the Agent Factory. Cross-domain exposure programmes. You have to actively build the pipeline that the old model provided passively through large teams and pair programming.

Ignore this and in five years you'll be desperately trying to hire seniors that the industry stopped producing.

Start with one team and one repo

If any of this resonates, don't reorganise your whole engineering department. Pick one team. Three or four people. One well-scoped product domain. Give them an Agent Factory, or build one with them. Set a constraint: humans specify and validate, agents execute. Measure what happens. Compare it to how the same scope would have been delivered under the old model.

The OpenAI team started with three engineers and an empty repository. The initial scaffold (repo structure, CI, formatting rules, package manager, even the agent instructions) was generated by agents. Everything that followed built on that foundation.

You don't need to bet the company. You need to run the experiment. But run it properly, with a real Agent Factory, real governance, real metrics. Handing a team a Copilot licence and calling it transformation doesn't count.

The meta-point

The era of Cloud Native gave us a blueprint: the organisations that won weren't the ones with the best Kubernetes clusters. They were the ones that understood the operating model shift and redesigned their teams, processes, and culture around it.

AI Native is the same pattern, one layer up. The organisations that will lead aren't the ones with the most sophisticated agent tooling. They're the ones that understand the operating model shift: from headcount-driven to specification-driven, from code-writing to environment-designing, from platform teams to agent factories.

Your engineering org is a prompt now. The question is whether you'll write it deliberately, or let it be written for you.

If you're exploring what the AI-native operating model looks like for your engineering organisation, get in touch.
