The Autocompact Cliff: Why Your 200K Context Window Is Lying to You

By Michael Czechowski

You're 70% through a complex refactoring task. The conversation has been productive — Claude understands your architecture, remembers the edge cases you mentioned, knows exactly which files need changes. Then you send one more message and suddenly it's like talking to a stranger.

You hit the autocompact cliff.

The 200K Illusion

Claude's context window advertises 200,000 tokens. That sounds enormous — roughly 150,000 words, or about 500 pages of text.

Here's what your actual context allocation looks like:

| Segment | Tokens | Share |
|---|---|---|
| System prompt + tools | ~20k | 10% |
| Memory files | ~10k | 5% |
| Conversation | ~70k | 35% |
| Available space | ~56k | 28% |
| Autocompact buffer | ~45k | 22% |

That 22% autocompact buffer is not optional padding. It's a hard threshold. Once you cross it, the system triggers compression and summarization of your conversation history. The nuance and context that informed earlier decisions — gone.

Your actual working space before disaster strikes: approximately 55k tokens — not the theoretical 200k.
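The arithmetic behind that figure can be sketched in a few lines. The segment sizes are the approximate values from the table above; the names `SEGMENTS` and `working_space` are illustrative and not part of any Claude tooling.

```python
# Approximate segment sizes from the table above, in tokens.
CONTEXT_WINDOW = 200_000
SEGMENTS = {
    "system_prompt_and_tools": 20_000,
    "memory_files": 10_000,
    "conversation": 70_000,
    "autocompact_buffer": 45_000,
}


def working_space(window: int, segments: dict[str, int]) -> int:
    """Tokens left before the conversation runs into the autocompact buffer."""
    return window - sum(segments.values())


remaining = working_space(CONTEXT_WINDOW, SEGMENTS)
print(f"{remaining:,} tokens left of {CONTEXT_WINDOW:,}")  # 55,000 tokens left of 200,000
```

Because every segment is an estimate, the result is best read as an order of magnitude: roughly a quarter of the advertised window.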

Epoch AI's analysis of 123 models shows context windows growing at roughly 30x per year since mid-2023 (Burnham & Adamczewski 2025). But raw capacity is not effective capacity. At 32,000 tokens, 11 tested models dropped below 50% of their short-context performance on tasks requiring latent association (Vodrahalli et al. 2025). The Chroma Research team coined a name for this: context rot — performance degrading non-uniformly as input grows, even on trivial tasks (Hong, Troynikov & Huber 2025).

The context window is the agent's working memory. Like human working memory, it has an effective capacity far smaller than its theoretical maximum. The "lost in the middle" effect, where models attend poorly to information that isn't near the beginning or end, means that a 200K window often functions like a much smaller one (Liu et al. 2023).

Autocompact: The Hidden Threshold

Autocompact exists for good reason: without it, conversations would simply fail once they exceeded the context limit. The system gracefully degrades by compressing older messages into summaries.

But "graceful degradation" is not free. Here's what you lose:

- Specific details: "Use the singleton pattern for DatabaseManager" becomes "discussed architecture patterns"

- Reasoning chains: Why you rejected approach A in favor of B disappears

- Edge cases: Tricky exceptions you mentioned get collapsed into generalities

- File relationships: The connection between `auth-middleware.ts` and `session-manager.ts` fades

Each autocompact cycle loses information. In long sessions, you might trigger multiple compressions, each one reducing fidelity. After 3–4 cycles, Claude may have only vague summaries of your project — while you assume it remembers everything.

The worst part: you often don't notice immediately. Claude will confidently continue the conversation, but its suggestions subtly drift from your actual requirements. You catch inconsistencies later, after you've already implemented the wrong approach.

Compaction also breaks prompt cache efficiency. Cache hits require exact prefix matches: the old prompt must be an exact prefix of the new prompt (Bolin 2026). Any change to earlier content invalidates the cache. When compaction rewrites your conversation history, the next inference call can no longer reuse anything cached past the rewritten point, so the cache has to be rebuilt from there.
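A minimal sketch of the prefix rule, under a simplifying assumption: prompts are modeled as lists of messages, whereas real caches work on tokens. The helper names and the sample history are hypothetical.

```python
def shared_prefix_len(old: list[str], new: list[str]) -> int:
    """Count how many leading messages the two prompts have in common."""
    count = 0
    for a, b in zip(old, new):
        if a != b:
            break
        count += 1
    return count


def is_cache_hit(old: list[str], new: list[str]) -> bool:
    """Simplified rule from the text: the old prompt must be an exact prefix of the new one."""
    return shared_prefix_len(old, new) == len(old)


history = [
    "system: coding assistant",
    "user: refactor auth",
    "assistant: compared approach A and B",
    "user: go with B",
]

# A normal turn only appends, so the old prompt is still a prefix.
appended = history + ["assistant: done"]

# Compaction rewrites earlier messages into a summary.
compacted = [
    "system: coding assistant",
    "summary: discussed auth refactor",
    "assistant: done",
]

print(is_cache_hit(history, appended))        # True
print(is_cache_hit(history, compacted))       # False
print(shared_prefix_len(history, compacted))  # 1
```

In this toy model only the system message survives as a reusable prefix after compaction, which is one reason to keep static content at the very front.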

Context Separation

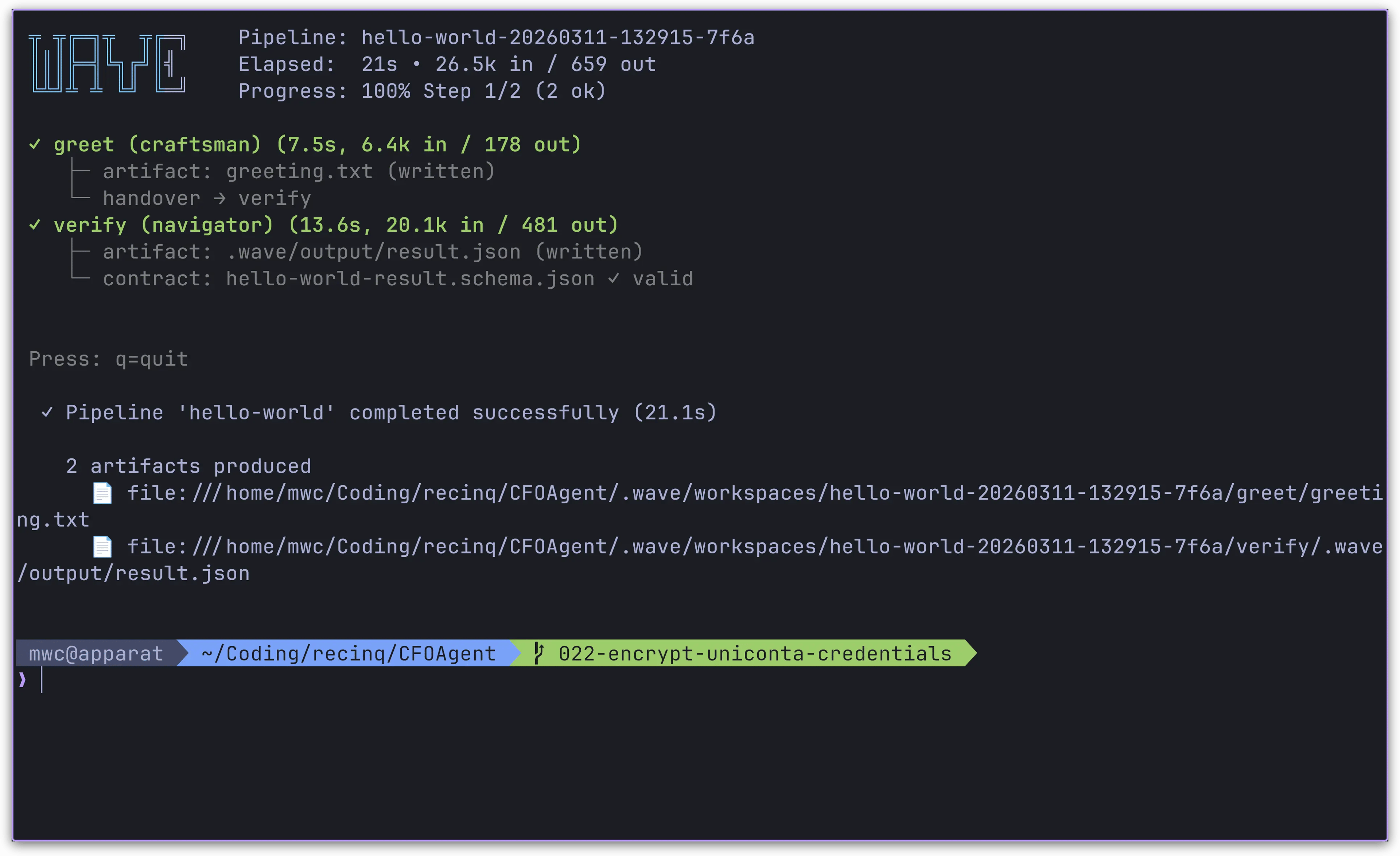

The solution is architectural: stop dumping everything into one context.

Separate concerns into layers:

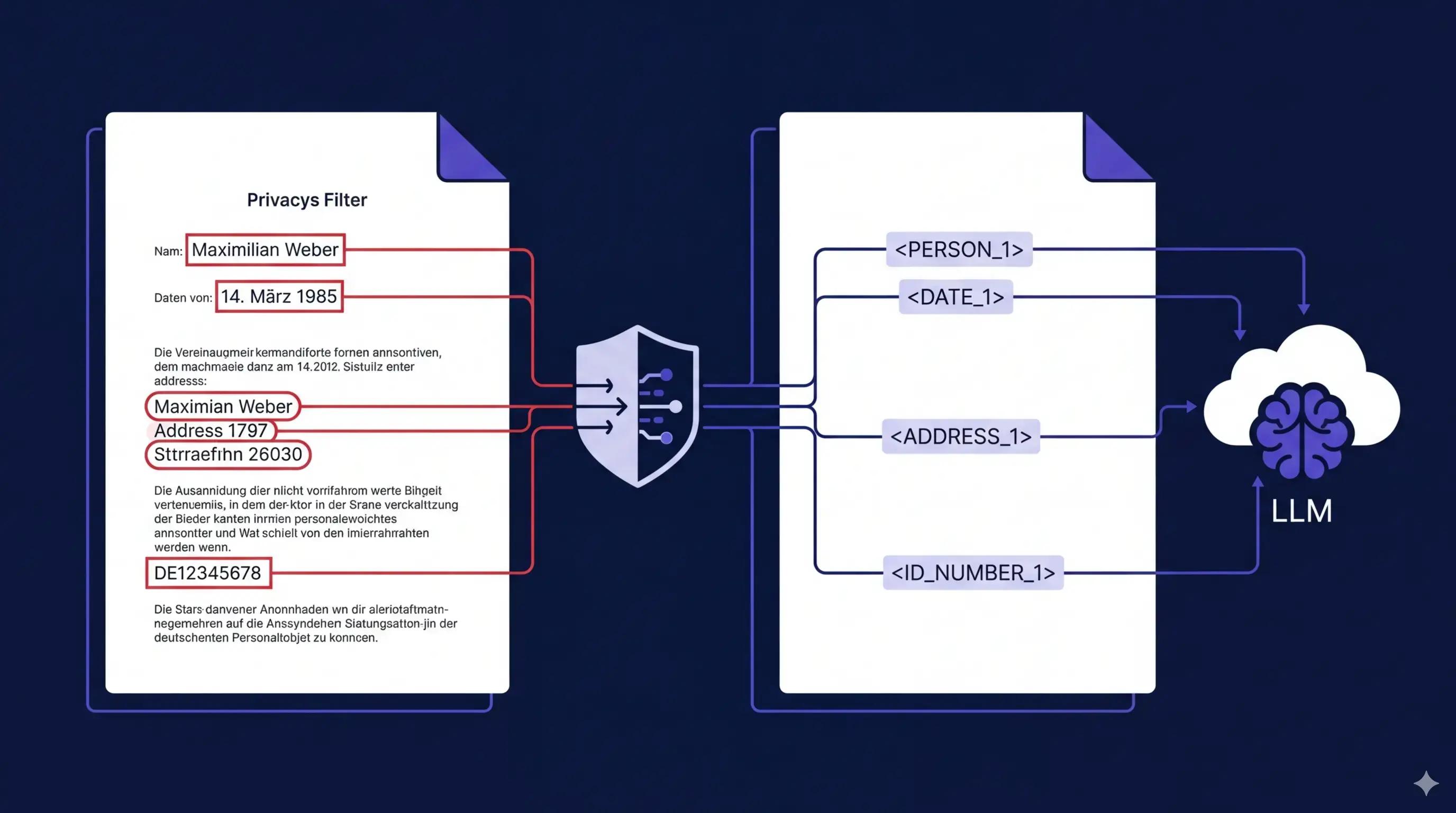

- System layer: Static instructions, tool definitions — front-loaded for cache hits

- Memory layer: Persistent state in external storage (files, databases) — loaded on demand

- Conversation layer: The actual back-and-forth — this is what you're budgeting

- Tool output layer: Results from file reads, searches, executions — the biggest consumer

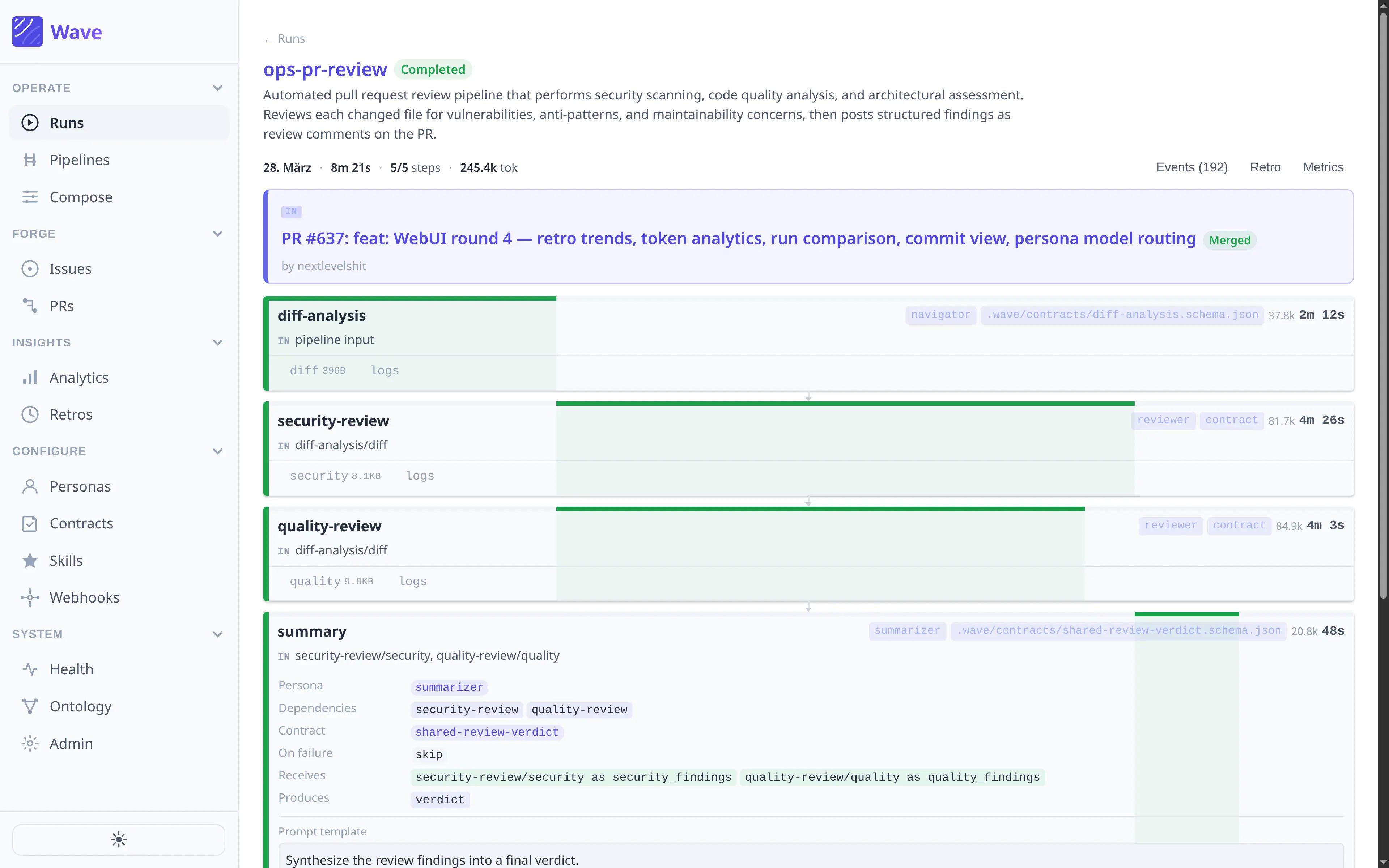

Background workers executing retrieval without consuming foreground tokens is the key pattern. The agent searches and indexes the codebase without eating into the conversation budget.
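One way to picture the four layers is as separate buckets that are accounted for independently. The sketch below is hypothetical: `ContextLayers` is not a real API, and `estimate_tokens` is a crude stand-in that reuses this article's ratio of about 200k tokens per 150k words rather than a real tokenizer.

```python
from dataclasses import dataclass, field


def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 200k tokens per 150k words (assumption)."""
    return round(len(text.split()) * 200 / 150)


@dataclass
class ContextLayers:
    """Hypothetical container that keeps the four layers apart for accounting."""
    system: str
    memory: list[str] = field(default_factory=list)
    conversation: list[str] = field(default_factory=list)
    tool_output: list[str] = field(default_factory=list)

    def usage(self) -> dict[str, int]:
        """Estimated tokens consumed by each layer."""
        return {
            "system": estimate_tokens(self.system),
            "memory": sum(map(estimate_tokens, self.memory)),
            "conversation": sum(map(estimate_tokens, self.conversation)),
            "tool_output": sum(map(estimate_tokens, self.tool_output)),
        }

    def largest_consumer(self) -> str:
        """Name of the layer with the highest estimated token count."""
        usage = self.usage()
        return max(usage, key=usage.get)


layers = ContextLayers(
    system="You are a coding assistant. Use the provided tools.",
    memory=["Project uses TypeScript and a singleton DatabaseManager."],
    conversation=["Add two-factor authentication to the login flow."],
    # Simulated file read: one line repeated to mimic a large tool result.
    tool_output=["export function getSession(req) { return req.cookies.session; } " * 20],
)
print(layers.usage())
print(layers.largest_consumer())  # tool_output
```

Keeping the buckets separate makes it visible when tool output, rather than the conversation itself, is what pushes a session toward the threshold.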

This maps to Anthropic's principle: find "the smallest set of high-signal tokens that maximize the likelihood of some desired outcome" (Rajasekaran et al. 2025).

The Discovery Problem

Context separation solves execution efficiency — how agents use context once they have it. But there's a separate problem: discovery efficiency — finding the right context to load in the first place.

Consider a typical scenario:

Task: "Add two-factor authentication to login flow"

Discovery process:

1. Semantic search identifies files mentioning "authentication", "login", "user"

2. AST analysis finds function signatures related to auth

3. Agent loads 15-20 files based on text/structure matching

Results:

- Files loaded: 18

- Token cost: 42k tokens

- Actually relevant: ~7 files (39% precision)

- Wasted: 11 files consuming tokens while contributing noise

Every irrelevant file you load moves you closer to the autocompact threshold. The question isn't "how do we pack more into context?" but "how do we ensure what we load is actually relevant?"
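The numbers in the scenario above work out as follows. The file counts and token cost come from the example; the wasted-token figure is only an estimate, because it assumes tokens are spread evenly across files, which the scenario does not state.

```python
files_loaded = 18
files_relevant = 7
tokens_loaded = 42_000

# Precision: share of loaded files that were actually relevant.
precision = files_relevant / files_loaded
wasted_files = files_loaded - files_relevant

# Assumption: every file costs about the same number of tokens.
wasted_tokens_estimate = round(tokens_loaded * wasted_files / files_loaded)

print(f"precision: {precision:.0%}")   # precision: 39%
print(f"wasted files: {wasted_files}")  # wasted files: 11
print(f"wasted tokens (even-split estimate): {wasted_tokens_estimate:,}")
```

Under that even-split assumption, well over half of the 42k tokens go to files that contribute only noise.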

This is Dennett's frame problem applied to context management. R1D1 tried to reason about everything and ran out of time. An agent that loads every potentially relevant file runs out of tokens (Dennett 1984).

Sub-agent architectures address this: specialized agents handle focused tasks and return condensed summaries rather than raw output (Rajasekaran et al. 2025). The main context gets the answer, not the search process.
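The shape of that hand-off can be sketched with a plain function standing in for a sub-agent. Everything here is hypothetical: `search_subagent` does a simple substring scan rather than calling a model, and the file contents are invented for illustration. The point is only that the caller receives a short summary instead of the raw files.

```python
def search_subagent(query: str, files: dict[str, str], max_hits: int = 3) -> str:
    """Hypothetical sub-agent: scans files in its own scope, returns a short summary."""
    hits = []
    for path, content in files.items():
        matching = [
            line for line in content.splitlines()
            if query.lower() in line.lower()
        ]
        if matching:
            hits.append(f"{path}: {len(matching)} matching line(s)")
    if not hits:
        return f"No files mention {query!r}."
    return "\n".join(hits[:max_hits])


# Invented file contents, for illustration only.
files = {
    "auth-middleware.ts": (
        "import { getSession } from './session-manager';\n"
        "export function requireLogin(req) {\n"
        "  return getSession(req) !== null;\n"
        "}\n"
    ),
    "session-manager.ts": (
        "export function getSession(req) {\n"
        "  return req.cookies.session ?? null;\n"
        "}\n"
        "// login state lives in the session cookie\n"
    ),
    "README.md": "# Demo project\nSetup instructions and license.\n",
}

summary = search_subagent("login", files)
raw_size = sum(len(content) for content in files.values())

# Only the summary enters the main context, not the file contents.
main_context = ["user: Add two-factor authentication to login flow", summary]
print(summary)
print(f"{len(summary)} characters returned instead of {raw_size}")
```

A real sub-agent would reason over the files with its own context window; the budgeting effect on the main conversation is the same.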

Audit Your Context Budget

Before optimizing, measure.

| Utilization | Status | Action |
|---|---|---|
| <50% | Healthy | Room for complex tasks |
| 50–60% | Watch | Monitor for long sessions |
| 60–70% | Caution | Consider splitting tasks |
| >70% | Danger | Autocompact imminent |

Goal: stay under 60% utilization to preserve conversation history and avoid autocompact.
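The thresholds in the table translate directly into a small check. This is a sketch, not a Claude feature: `context_status` is a hypothetical helper, the 200k default mirrors the window discussed in this article, and placing exactly 60% in "Caution" is an assumption, since the table leaves that boundary ambiguous.

```python
def context_status(used_tokens: int, window: int = 200_000) -> tuple[str, str]:
    """Map context utilization to the (status, action) rows of the table above."""
    # Integer comparison avoids floating-point surprises at the boundaries.
    scaled = used_tokens * 100
    if scaled < 50 * window:
        return ("Healthy", "Room for complex tasks")
    if scaled < 60 * window:
        return ("Watch", "Monitor for long sessions")
    if scaled <= 70 * window:
        return ("Caution", "Consider splitting tasks")
    return ("Danger", "Autocompact imminent")


# System prompt + memory files + conversation from the earlier allocation table.
used = 20_000 + 10_000 + 70_000
print(context_status(used))  # ('Watch', 'Monitor for long sessions')
```

With the allocation shown earlier, the session already sits at 50% and is in the "Watch" band before any further files are loaded.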

Operational patterns that help:

- One clear goal per session — branch work across sessions, not requirements within sessions

- Extract repetitive context into Skills that load only when relevant — progressive disclosure rather than upfront loading

- Front-load static content (system instructions, tool definitions) for cache efficiency; append variable content at the end (Bolin 2026)

- Persist decisions in structured notes (CLAUDE.md, memory files) that survive context resets

Skills and Progressive Disclosure

Stop copying the same context into every conversation. The Skills pattern loads documentation only when relevant.

The problem: teams maintain massive CLAUDE.md files with all their conventions, patterns, and examples. This loads into every session — even when you're just fixing a typo in a README.

The solution: extract domain-specific knowledge into Skills that load on demand. Auth patterns load when the task mentions authentication. Database conventions load when the task touches migrations. For everything else, those tokens stay available for actual work.
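The on-demand idea can be illustrated with a toy selector. The keyword triggers are a stand-in for whatever relevance mechanism the real Skills system uses, and the skill names and file paths are hypothetical.

```python
# Hypothetical skill registry: names, trigger words, and paths are illustrative.
SKILLS = {
    "auth": {
        "triggers": ("auth", "login", "2fa"),
        "path": "skills/auth-patterns.md",
    },
    "database": {
        "triggers": ("migration", "schema", "database"),
        "path": "skills/db-conventions.md",
    },
}


def select_skills(task: str) -> list[str]:
    """Return the names of skills whose triggers appear in the task description."""
    text = task.lower()
    return [
        name
        for name, skill in SKILLS.items()
        if any(trigger in text for trigger in skill["triggers"])
    ]


print(select_skills("Add two-factor authentication to login flow"))  # ['auth']
print(select_skills("Fix a typo in the README"))                     # []
```

For the README typo nothing is loaded at all, so those tokens stay available for the actual work.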

This is Karpathy's "just the right information" made operational: "the delicate art and science of filling the context window with just the right information for the next step" (Karpathy 2025).

Takeaway

Track your context usage like you track cloud spend. Stay under 60% utilization to preserve conversation continuity. Extract repetitive context into Skills that load only when needed. The context window is not how much the model can hold — it's how much it can effectively use.

Sources

- Burnham & Adamczewski 2025 — Context window growth rates

- Vodrahalli et al. 2025 — Effective context collapse at 32K tokens

- Hong, Troynikov & Huber 2025 — Context rot across 18 models

- Liu et al. 2023 — Lost in the middle effect

- Bolin 2026 — Prompt cache optimization in agent loops

- Rajasekaran et al. 2025 — Anthropic's context engineering guide

- Karpathy 2025 — Context engineering as the new discipline

- Dennett 1984 — The frame problem and bounded rationality
