Wave: Bringing Determinism Back to AI-Assisted Development

One of the subtle but persistent frustrations with AI coding agents is that they're good — just not consistently good at everything at once.

Ask Claude to implement a complex Git workflow, and it'll pull it off brilliantly. But check the README afterwards and you might find it quietly mangled the documentation while it was at it. Point out the mistake and it'll immediately say: "Of course, I'll fix that now." It's not that the model lacks the capability. It's that it can't keep every dimension of quality in focus at the same time.

This is the problem Wave was built to solve.

The Attention Problem in Agentic Coding

When you work with a coding agent, there's an implicit expectation that "write good code" covers all of the following: it works, it's readable, it's reliable, it's performant, it's secure. But these are competing demands on the model's attention. In practice, the agent nails the thing you explicitly asked about and drops the ball on the things you assumed it would handle.

The workaround developers end up with is a manual checklist after the fact: "Now you've implemented it — can you check for security issues? Any duplicate code? Did you leave anything stale lying around?" It works, but it depends entirely on the developer remembering to ask.

What you actually want is a deterministic guarantee: certain checks will run, in a specific order, every time. Not "the model might decide to check security if it feels like it" — but a pipeline you can count on.

What Wave Is

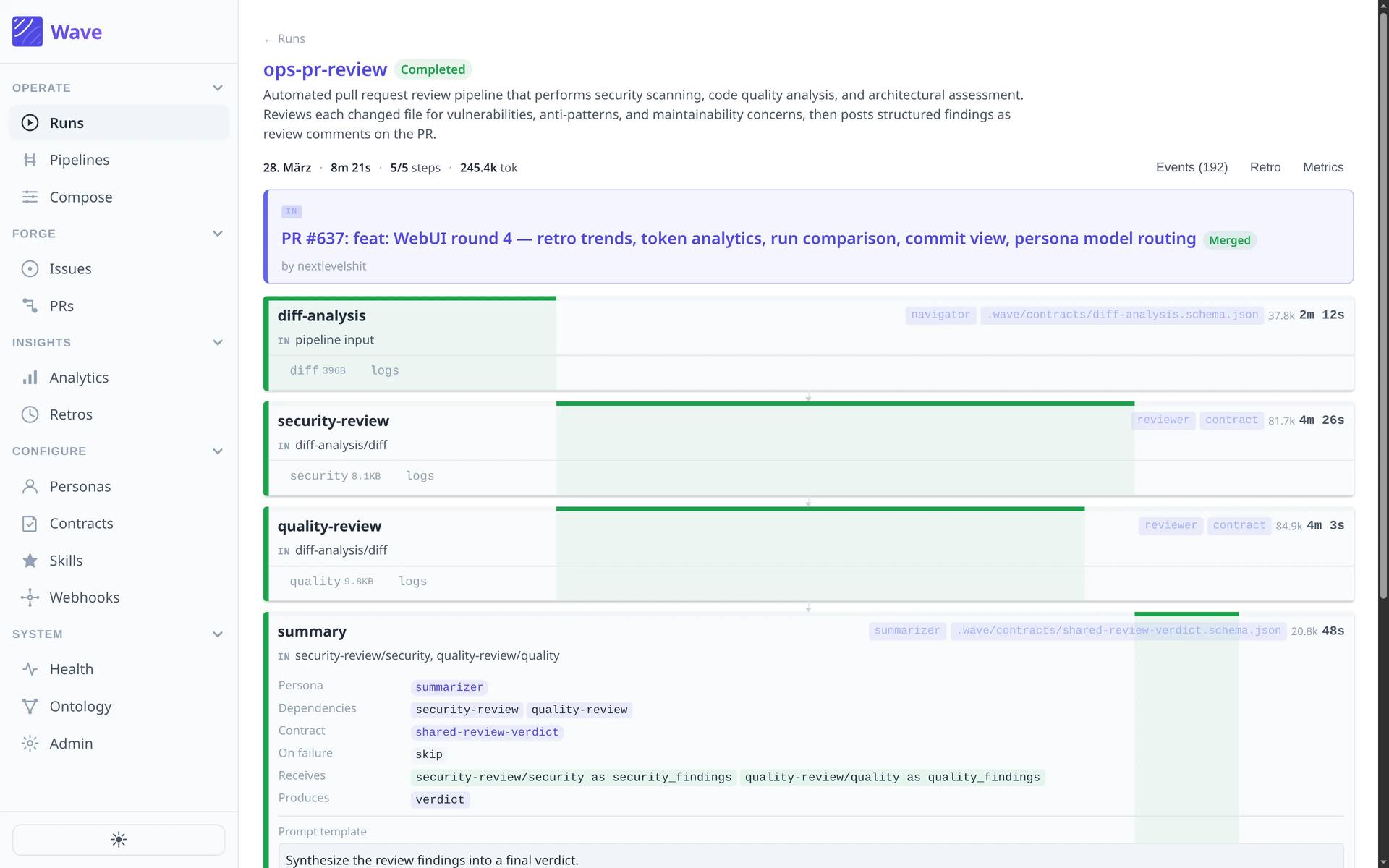

Wave is a CLI tool, with a TUI and a web UI, that lets you define these pipelines as simple YAML files. Each pipeline is a directed acyclic graph (DAG) of steps, where each step has isolated context, a specific purpose, and a contract that defines what it must produce before the next step begins.

A pipeline for identifying and removing dead code might look like this:

- Scan: analyse the codebase and output a JSON artifact listing dead code candidates

- Verify: check that the artifact matches the expected schema

- Remove: use the verified artifact to perform targeted deletions

- Audit: run the final checks in parallel (security, quality, cost-efficiency, etc.)

- Review: gather all information and create a GitHub issue, comment on a PR, or just write a simple Markdown file
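To make the graph idea concrete, the steps above can be sketched as a dependency map and sorted into batches. This is a minimal illustration in Python, not Wave's own pipeline format (Wave pipelines are YAML). The step names, the three-way split of the audit stage, and the `execution_batches` helper are all assumptions made for this example.

```python
from graphlib import TopologicalSorter

# Hypothetical step names mirroring the list above.
# Each step maps to the set of steps it depends on.
# Splitting "audit" into three parallel steps is an assumption for illustration.
PIPELINE = {
    "scan": set(),
    "verify": {"scan"},
    "remove": {"verify"},
    "audit_security": {"remove"},
    "audit_quality": {"remove"},
    "audit_cost": {"remove"},
    "review": {"audit_security", "audit_quality", "audit_cost"},
}


def execution_batches(graph):
    """Group steps into ordered batches; steps in one batch do not depend on each other."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not acyclic
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every step whose dependencies are done
        batches.append(ready)
        sorter.done(*ready)
    return batches
```

Calling `execution_batches(PIPELINE)` yields five batches: the first three contain one step each, the fourth holds the three audit steps together, and the last is the review. The order is fixed by the graph rather than by what a model decides to do next.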

Each Claude Code instance runs in isolation inside a Git worktree, completely separated from your working directory, so running several pipelines at once is not a problem. Steps can also run in parallel where the graph allows it. The human reviews the output at the end, not at every intermediate step.
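For readers who haven't used worktrees, here is a rough sketch of what that isolation looks like at the Git level. It relies only on the standard `git worktree add` command. The helper names and the one-directory-per-step layout are assumptions for illustration and say nothing about how Wave organises its worktrees internally.

```python
import subprocess
from pathlib import Path


def worktree_add_command(worktree_dir, ref="HEAD"):
    """Build the git command that checks out `ref` into a separate directory."""
    # --detach keeps the throwaway checkout from creating or moving a branch
    return ["git", "worktree", "add", "--detach", str(worktree_dir), ref]


def create_step_worktree(repo_dir, base_dir, step_name, ref="HEAD"):
    """Create an isolated checkout for one step and return its path.

    Hypothetical layout: one directory per step under `base_dir`.
    """
    worktree_dir = Path(base_dir).resolve() / step_name
    subprocess.run(worktree_add_command(worktree_dir, ref), cwd=repo_dir, check=True)
    return worktree_dir
```

An agent working inside the returned directory can edit and delete files freely. Your own checkout stays untouched until you decide to merge the result.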

The contract mechanism is the key piece. Before a step hands off to the next, it validates that its output matches a defined schema. This is what makes the pipeline reliable: you're not trusting the model to "remember" what the previous step did — you're enforcing a handshake.
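A contract check of this kind can be sketched in a few lines. The field names (`candidates`, `path`, `symbol`, `reason`) and the `ContractError` type are invented for this example, since the real artifact schema is whatever the pipeline author defines. A production setup would more likely use a proper schema validator than this hand-rolled check.

```python
import json

# Hypothetical contract for the scan step's artifact: field name -> required type.
SCAN_CONTRACT = {"candidates": list}
CANDIDATE_CONTRACT = {"path": str, "symbol": str, "reason": str}


class ContractError(ValueError):
    """Raised when a step's output does not satisfy its contract."""


def check_fields(obj, contract, where):
    """Verify that `obj` is a JSON object with every required field of the right type."""
    if not isinstance(obj, dict):
        raise ContractError(f"{where}: expected an object")
    for field, expected_type in contract.items():
        if field not in obj:
            raise ContractError(f"{where}: missing field '{field}'")
        if not isinstance(obj[field], expected_type):
            raise ContractError(
                f"{where}: field '{field}' must be {expected_type.__name__}"
            )


def validate_scan_artifact(raw_json):
    """Parse a step's JSON output and return it only if it satisfies the contract."""
    try:
        artifact = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        raise ContractError(f"artifact is not valid JSON: {exc}") from exc
    check_fields(artifact, SCAN_CONTRACT, "artifact")
    for i, candidate in enumerate(artifact["candidates"]):
        check_fields(candidate, CANDIDATE_CONTRACT, f"candidates[{i}]")
    return artifact
```

The point of the design is that the next step only ever receives the return value of `validate_scan_artifact`. If the scan step produces prose, partial JSON, or an object with missing fields, the pipeline stops at the handoff instead of passing the problem downstream.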

Not GasTown, Not Just Skills

Wave sits in an interesting middle ground. On one end of the agentic spectrum you have manual coding with Claude Code, where you're in the loop at every turn. On the other end you have full software factory tools like GasTown, which are powerful but come with a steep learning curve and a lot of concepts to internalise before you can do anything useful.

Wave is deliberately not that. The pipelines are just YAML. If you're familiar with GitHub Actions configuration, you'll feel at home. If you're not, you can still read a pipeline, understand what it does, and tweak it. Remove a step, add a tool call, change the order — it's designed to feel like tinkering in a garage, not waiting three hours for a laboratory process to complete.

It's also distinct from Claude Code skills. Skills are offered to the model as tools it can use; the model decides whether to invoke them. Wave enforces the order in which things happen, deterministically. The two are complementary — you can invoke skills from inside a Wave pipeline.

Wave ships with built-in pipelines: changelog generation, comprehensive code review, dead code identification, documentation gap detection, feature implementation, and more. The web UI is there for teams who want something less terminal-facing.

Built with Claude, on Claude

Perhaps the most fitting thing about Wave is how it was developed. Michael started with documentation — writing out the full spec for how the tool should work before a single line of implementation existed. Claude Code then built a working dummy from that documentation, with authentic-looking output. From there, Claude drove the implementation — using Wave to develop Wave further.

By the end, roughly 95% of the code was written by the model. The developer handled the Nix/sandbox configuration and judgment calls that genuinely required human oversight. Everything else was AI-authored.

This is spec-driven development taken seriously: write what you want, validate that the dummy behaves as documented, then let the agent implement it.

Where This Fits

Wave is being released as open source. It's not trying to be the definitive software factory. The goal is to fill the gap between "I'm prompting Claude manually and hoping for the best" and "I've committed fully to a complex multi-agent orchestration framework with a steep learning curve."

The documentation is live. Wave runs on Linux, macOS, and in sandbox environments (via Nix/Flake) for the security-conscious. CI/CD integration is on the roadmap.

If you're spending time re-prompting agents to fix things they should have caught the first time, this is probably worth ten minutes of your day.
