AI-Generated Code in Production: Validation, Accountability, and What Still Needs to Be Built

By Michael Czechowski

Code an AI agent has fabricated looks exactly like code it has written correctly. A colleague of mine traced a column name that her AI coding agent had used in code already in review. The agent confirmed it had invented the name from an assumption it never surfaced. The code looked fine; she found the problem only because she traced it. A separate case, from another colleague: an AI agent had removed authentication from an API endpoint, with no explanation in the diff and no comment in the code. Someone caught it in review. Had they not, the endpoint would have gone to production unauthenticated.

The models are doing what they are designed to do — completing tasks, filling gaps, keeping work moving. The problems showing up in practice are in the environment around them: how AI-generated code gets validated, who is accountable when it fails, and whether human review can scale to the volume being produced. Those are the questions the industry has not answered yet.

Where Adoption Actually Sits

Before getting into what needs to change, it is worth being accurate about where the industry sits right now.

The developers encountering fabricated column names and silent security changes are probably in the top one percent of AI coding adoption globally. The discourse about AI writing all the code, about software factories running without human involvement, about humans never reviewing diffs, describes what a small number of organisations at the leading edge are building toward. It does not describe where most engineering teams are.

At a recent cloud native conference, an event that attracts the more technically invested end of the developer population, the majority of attendees were only now trying AI coding tools for the first time. Engineering teams at banks and government agencies are further behind still. The pattern is not new: Google and Netflix were running microservices before tooling existed to support them at scale, the rest of the industry followed years later, and there are still mainframes in production today. The gap between the leading edge and the mainstream is large, and it matters for how governance, accountability structures, and tooling for responsible AI-assisted development get built. They are still being designed primarily by and for the organisations already deep in this, while most of the industry has not yet decided what it wants from these tools.

Testing Was Built for Different Conditions

Unit tests were written by humans holding specific assumptions, to check specific functions in code those same humans could read and reason about. When code is generated at speed by agents making their own structural assumptions, that model starts to fail at the edges.

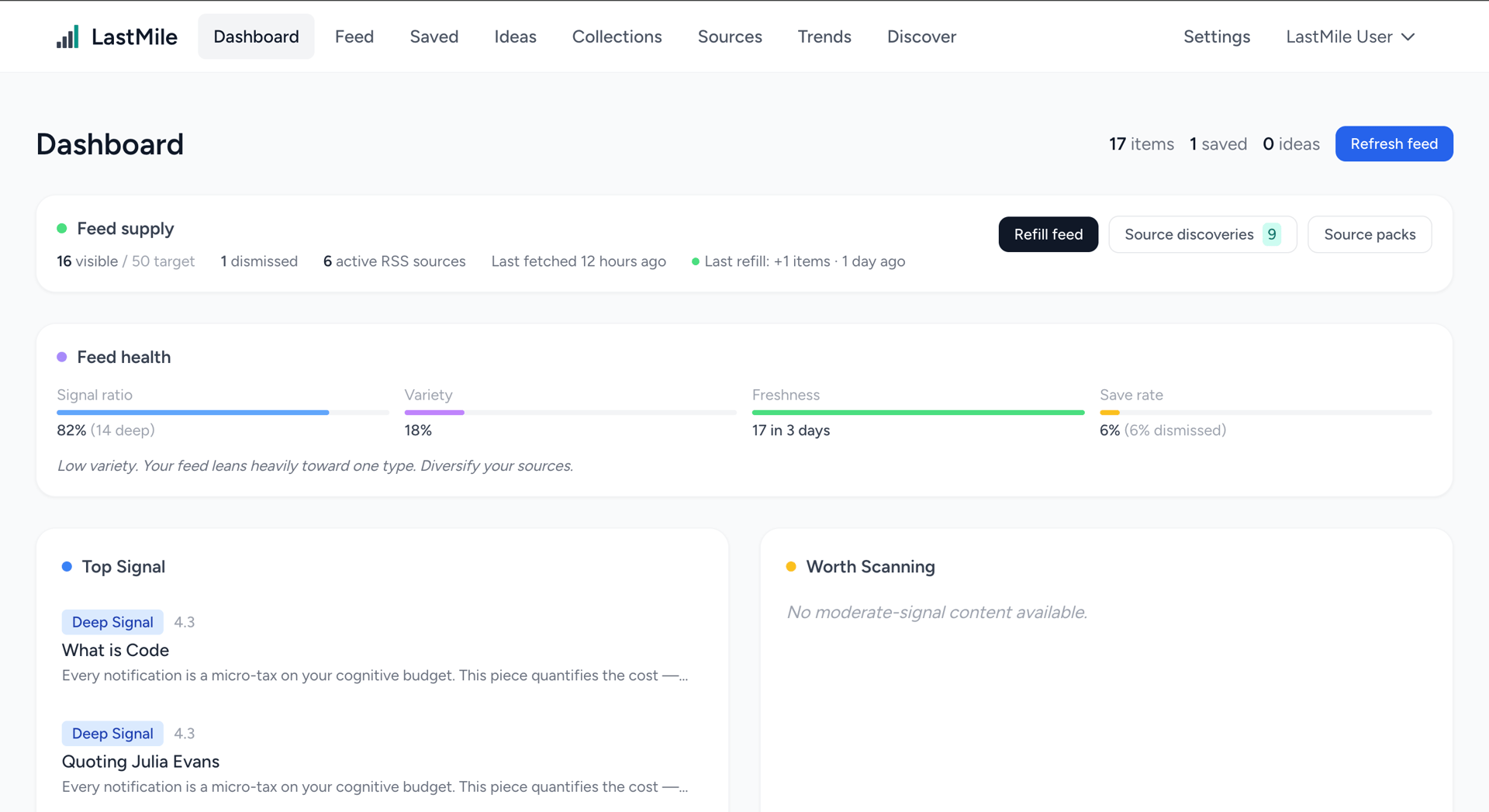

The argument gaining traction in engineering discussions is a shift from testing to validating. Rather than asserting that a function returns X given input Y, you describe how the software should behave at the level that actually matters: can users accomplish what they need to, do the right metrics move, does the system produce the right outcomes? The claim is that tests are too specific to scale with AI-generated code, and that evaluating outcomes against intent is more useful than verifying mechanics.
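To make the distinction concrete, here is a minimal Python sketch contrasting the two styles. The `Cart` class, its methods, and the scenarios are hypothetical, invented for illustration; a real validation suite would look at user journeys and production metrics rather than an in-memory toy. The unit test pins one exact answer, while the validation states an intended outcome and checks it across many cases.

```python
from dataclasses import dataclass, field


@dataclass
class Cart:
    """Hypothetical shopping cart used only for this illustration."""
    items: dict[str, int] = field(default_factory=dict)  # SKU -> price in cents

    def add(self, sku: str, price_cents: int) -> None:
        self.items[sku] = price_cents

    def total(self, discount_percent: int = 0) -> int:
        """Subtotal after a percentage discount, rounded down to whole cents."""
        subtotal = sum(self.items.values())
        return subtotal * (100 - discount_percent) // 100


# Testing: pin the exact output of one function for one hand-picked input.
def test_total_exact() -> None:
    cart = Cart()
    cart.add("book", 1000)
    assert cart.total(discount_percent=15) == 850


# Validating: state the outcome users and the business care about and
# check it across many scenarios, without pinning the exact arithmetic.
def validate_checkout_outcomes(scenarios: list[tuple[list[int], int]]) -> list[tuple]:
    """Return the scenarios that violate the intended outcome (empty list means all pass)."""
    failures = []
    for prices, discount in scenarios:
        cart = Cart()
        for i, price in enumerate(prices):
            cart.add(f"sku-{i}", price)
        charged = cart.total(discount)
        # Intent: a customer is never charged more than the undiscounted
        # subtotal, and never a negative amount.
        if not 0 <= charged <= sum(prices):
            failures.append((prices, discount, charged))
    return failures


test_total_exact()
print(validate_checkout_outcomes([([1000, 250], 0), ([1000], 15), ([], 50), ([999, 1], 100)]))
# → []
```

Passing a scenario such as `([1000], 120)` to `validate_checkout_outcomes` returns it as a failure, because a 120 percent discount would charge a negative amount, a case the single pinned unit test never exercises. Note, though, that the outcome check says nothing about how rounding works, which is exactly the looseness the next paragraph cautions against where exact arithmetic matters.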

For many domains this is reasonable. The limit case is worth sitting with, though. Intent is usually described in informal language, and informal language is imprecise by design, which is partly why formal mathematical specification languages were invented. For systems where approximate correctness is unacceptable, such as algorithmic trading or critical infrastructure, moving from formal verification to intent-based validation is a step in the wrong direction. Which domain you are in is not always obvious, and I think it is worth being honest about that rather than assuming your system falls into the category where loose validation is sufficient. Generated tests carry their own cost as well: any AI-generated test case might itself become unwanted glue code.

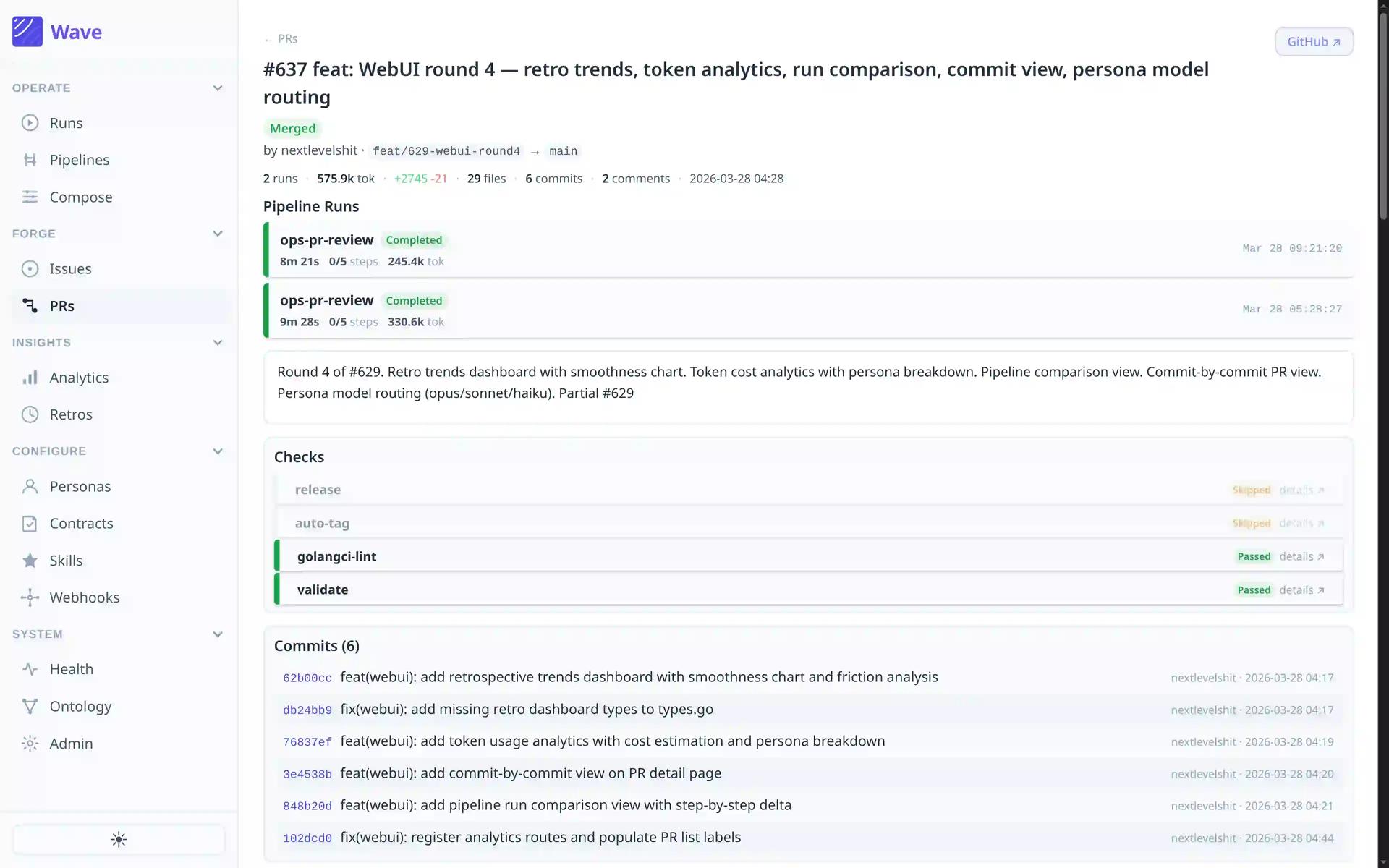

Human Review Will Not Scale

Separate from correctness: even where human review adds genuine value, the volume of AI-generated code may soon make it structurally impossible to sustain. This is already visible in teams moving fast with AI-assisted development — the codebase grows faster than the team's capacity to understand it in full.

At higher rates of AI authorship, human review stops being a quality gate and becomes a bottleneck that slows output without providing meaningful coverage. The more useful frame is to ask which specific parts of the codebase require human judgment, which risk profiles are high enough to justify the slower pace, and what automated validation can handle. Most engineering organisations have not worked through those questions deliberately, and the tooling is developing faster than the governance thinking around it.
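One way to make that frame explicit is to write the answers down as policy. The Python sketch below is a hypothetical illustration under stated assumptions: the path patterns, tier names, and functions are invented here, and a real policy would come from the organisation's own risk assessment. It maps changed paths to risk tiers and routes only the tiers deemed high enough to human review.

```python
from fnmatch import fnmatch

# Hypothetical rules mapping path patterns to risk tiers; first match wins.
# Note: fnmatch's "*" also matches "/", so "src/auth/*" covers subdirectories.
RISK_RULES: list[tuple[str, str]] = [
    ("src/auth/*", "high"),
    ("src/payments/*", "high"),
    ("migrations/*", "high"),
    ("src/api/*", "medium"),
]

# The deliberate decision: which tiers justify the slower pace of human review.
HUMAN_REVIEW_TIERS = {"high"}


def risk_tier(path: str) -> str:
    """Return the tier of the first matching rule, or 'low' if none match."""
    for pattern, tier in RISK_RULES:
        if fnmatch(path, pattern):
            return tier
    return "low"


def review_plan(changed_paths: list[str]) -> dict[str, list[str]]:
    """Split a change set into paths for human review and paths for automated validation."""
    plan: dict[str, list[str]] = {"human_review": [], "automated_validation": []}
    for path in changed_paths:
        key = "human_review" if risk_tier(path) in HUMAN_REVIEW_TIERS else "automated_validation"
        plan[key].append(path)
    return plan


print(review_plan(["src/auth/session.py", "src/api/routes.py", "docs/README.md"]))
# → {'human_review': ['src/auth/session.py'], 'automated_validation': ['src/api/routes.py', 'docs/README.md']}
```

Path rules are a crude proxy. The removed authentication check described at the start of this piece could easily live in an ordinary endpoint file, so a real policy would likely also need content-aware rules, for example flagging any diff that deletes an authentication check regardless of where it sits.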

Nobody Is Accountable Yet

There is not yet a clear answer to who is accountable when AI-generated code fails, and that absence is materially slowing adoption in regulated industries. It is not that banks and government engineering teams are uninterested in AI-assisted development. Their legal and compliance functions do not have a framework they can work with, and without one, the risk calculus does not resolve in favour of moving quickly.

There is precedent for building new accountability structures from scratch. The modern corporation is a legal entity that holds assets, bears obligations, and can be held accountable, despite not being a natural person. It was invented because commerce required it. Something similar will probably need to be built for AI-generated outputs, and its shape is not obvious yet.

The Code That Should Not Need to Exist

One more thread worth pulling on separately. A significant proportion of the code being written — by humans and agents alike — is boilerplate that should not need to exist in the first place.^1^ Infrastructure code, glue code, the scaffolding written to connect things that ought to connect themselves.

The pattern already exists in other domains: Infrastructure-as-Code replaced manual server provisioning with declarative configuration. The same shift could apply here. When components declare what they need, what they produce, and what they are permitted to do, the wiring between them becomes implicit. The orchestration code, the permission checks, the context threading, and the output validation all collapse into configuration.
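A minimal Python sketch of that idea follows. `Component`, `wire`, `execute`, and the permission strings are hypothetical, invented for illustration, and do not describe any existing framework. Each component only declares its needs, outputs, and permissions; one generic routine derives the execution order, checks declared permissions against a policy, threads context between steps, and validates each output against its declaration. That is the glue that would otherwise be hand-written for every integration.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Callable


@dataclass(frozen=True)
class Component:
    """Hypothetical declarative component: what it needs, produces, and may do."""
    name: str
    needs: frozenset[str]
    produces: frozenset[str]
    permissions: frozenset[str]
    run: Callable[[dict], dict]


def wire(components: list[Component], granted: dict[str, frozenset[str]]) -> list[Component]:
    """Derive execution order from declarations; reject missing inputs and ungranted permissions."""
    producers: dict[str, str] = {}
    for c in components:
        for out in c.produces:
            if out in producers:
                raise ValueError(f"{out!r} is produced by both {producers[out]} and {c.name}")
            producers[out] = c.name

    graph: dict[str, set[str]] = {}
    for c in components:
        missing = c.needs - set(producers)
        if missing:
            raise ValueError(f"{c.name} needs inputs nobody produces: {sorted(missing)}")
        ungranted = c.permissions - granted.get(c.name, frozenset())
        if ungranted:
            raise PermissionError(f"{c.name} declares ungranted permissions: {sorted(ungranted)}")
        graph[c.name] = {producers[n] for n in c.needs}  # dependency edges come from declarations

    by_name = {c.name: c for c in components}
    return [by_name[n] for n in TopologicalSorter(graph).static_order()]


def execute(ordered: list[Component]) -> dict:
    """Thread context between components and validate each output against its declaration."""
    context: dict = {}
    for c in ordered:
        outputs = c.run({k: context[k] for k in c.needs})  # only declared inputs are visible
        if set(outputs) != c.produces:
            raise ValueError(f"{c.name} returned {sorted(outputs)}, declared {sorted(c.produces)}")
        context.update(outputs)
    return context


fetch_user = Component("fetch_user", frozenset(), frozenset({"user"}), frozenset({"db:read"}),
                       lambda _: {"user": {"id": 7, "name": "Ada"}})
greet = Component("greet", frozenset({"user"}), frozenset({"greeting"}), frozenset(),
                  lambda inputs: {"greeting": f"Hello, {inputs['user']['name']}"})

ordered = wire([greet, fetch_user], granted={"fetch_user": frozenset({"db:read"})})
print([c.name for c in ordered])     # → ['fetch_user', 'greet']
print(execute(ordered)["greeting"])  # → Hello, Ada
```

Adding a third component means adding a declaration, not new wiring code. The trade-off is that correctness now lives in the declarations and the generic engine, which are smaller surfaces to review but still need reviewing.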

Building in that direction would shrink the surface area of code that needs to be generated, reviewed, or validated at all. Reducing the amount of code that needs to exist is a more tractable problem than building better governance around the volume being produced now.

What Engineering Organisations Need to Build

The capability question is largely beside the point. AI can write code that passes review. The open questions are in the environment around it — how it gets validated, who owns failures when they occur, and how governance keeps pace with the volume being produced.

The fabricated column name and the silently removed authentication check are not anomalies. They are what capability without the right environment looks like. The organisations that build that environment first will set the terms for everyone who follows.

^1^ Brooks, Frederick P. "No Silver Bullet — Essence and Accident in Software Engineering." Proceedings of the IFIP Tenth World Computing Conference, 1986, pp. 1069–1076.
